# Navigating the New Normal: Algorithmic Model Design in a 'Higher for Longer' Macro Regime

The financial markets are in a state of profound flux, recalibrating to a macro environment distinctly different from the quantitative easing era that preceded it. For algorithmic traders, this shift is not merely a change in market sentiment; it represents a fundamental reordering of the underlying statistical properties that govern asset prices. The prevailing narrative, increasingly solidified, is one of "higher for longer" interest rates, persistent inflation, and diverging global economic trajectories [1, 4, 6]. This new normal demands a rigorous re-evaluation of how algorithmic models are designed, calibrated, and deployed. At QuantArtisan, we recognize that adaptability is not just a virtue but a necessity for survival and profitability in this evolving landscape.

## The Current Landscape

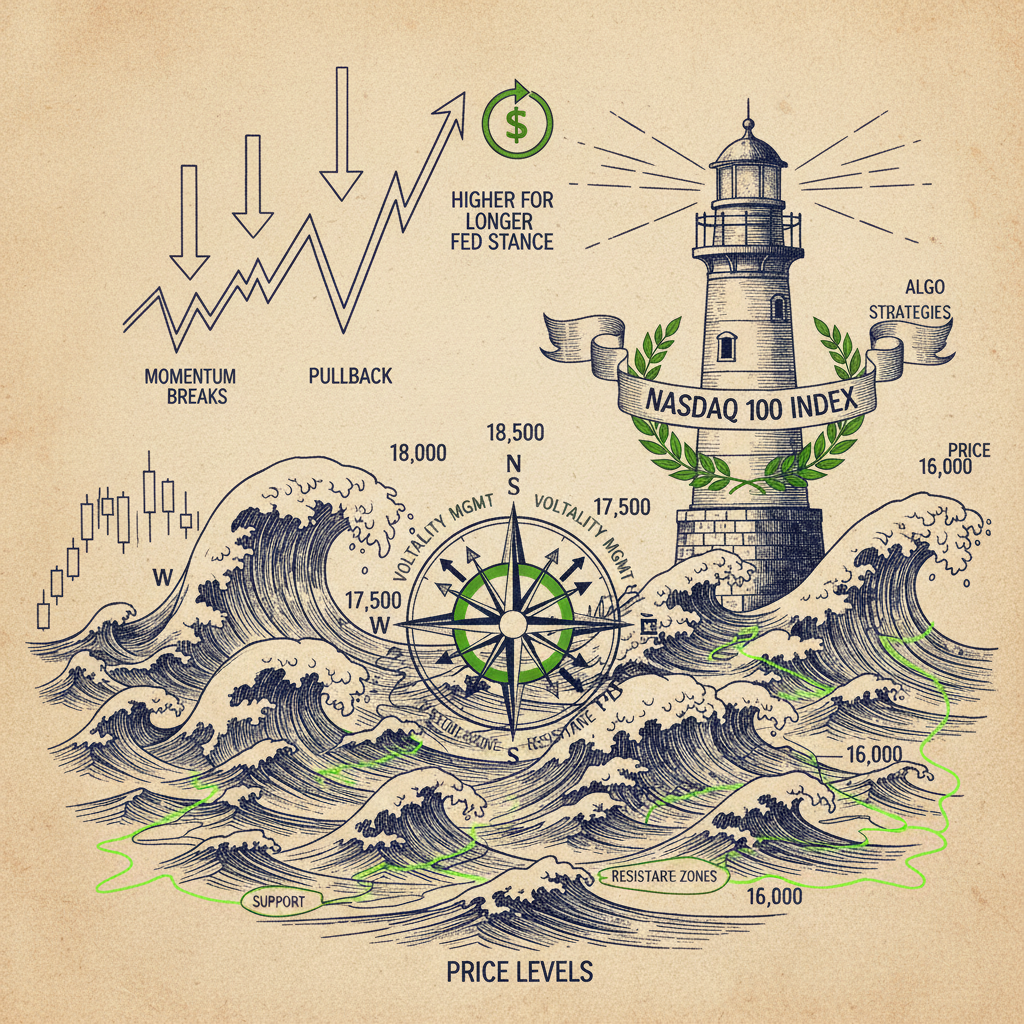

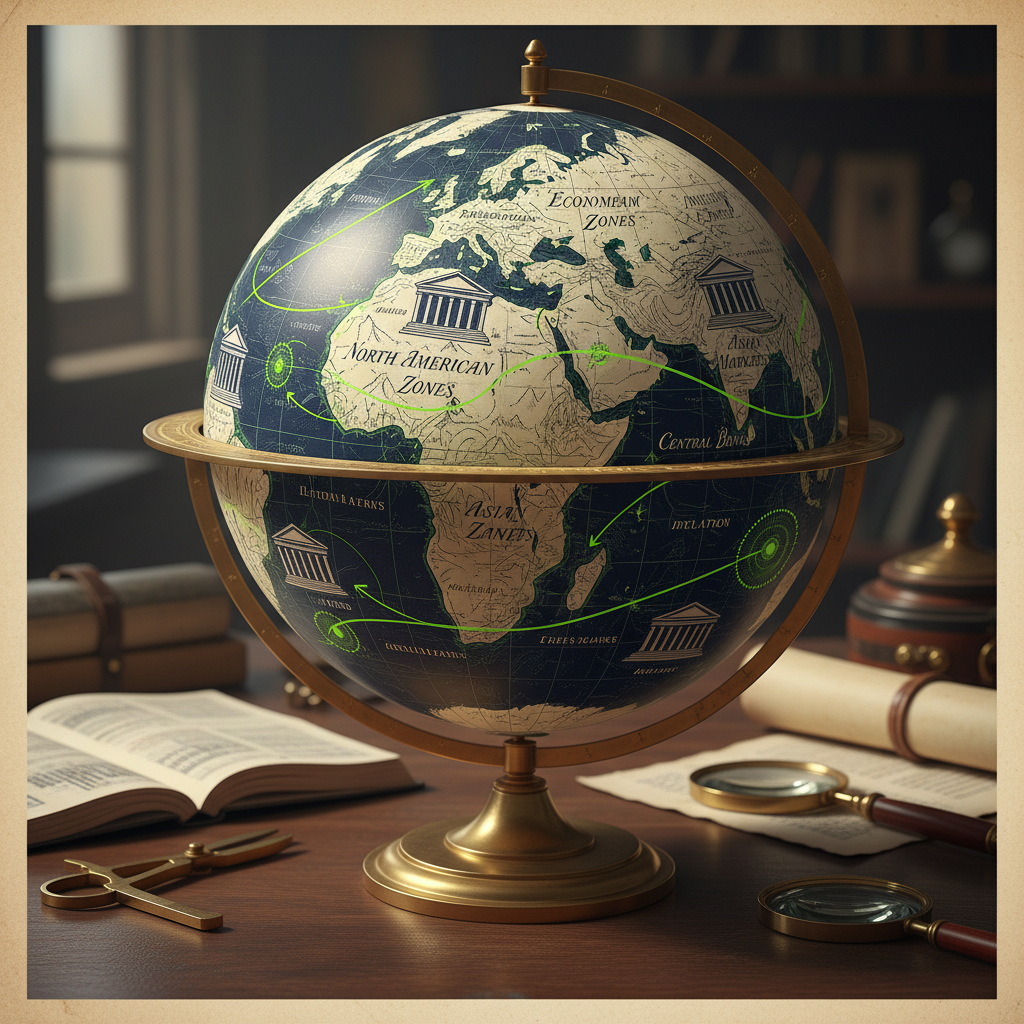

The current market environment is characterized by a confluence of factors that challenge traditional algorithmic strategies. Persistent inflation, coupled with central banks' commitment to data-dependent, often hawkish, policies, has ushered in an era where the cost of capital remains elevated for an extended period [3, 4, 6]. This "higher for longer" stance by the Federal Reserve, for instance, has profound implications across asset classes, from equity valuations to fixed income yields and currency dynamics [5]. Algorithmic traders are grappling with a complex market where concentrated equity momentum, particularly in AI-related tech stocks, has coexisted with underlying inflationary pressures, creating a nuanced and often contradictory landscape [2, 5].

This environment has been described as a "stagflation-lite" regime for 2026, where systematic traders must recalibrate their models to account for diverging global economies and the implications of sustained higher rates [4]. The resilience of the U.S. economy, even in the face of delayed Fed rate cuts, suggests that the systematic strategies of the past may no longer be optimal [1]. Instead, strategies must be agile, capable of adapting to a market where momentum can be fragile, and volatility management becomes paramount [5]. The 'NACHO' trade, for example, exemplifies how systematic strategies are adapting to persistent inflation and delayed Fed cuts, highlighting the need for innovative approaches [1].

The performance of systematic strategies, particularly trend-following CTAs, is under scrutiny as they adapt to this 2026 macro landscape shaped by central bank inflation management [3, 6]. The challenge lies in identifying and responding to these regime shifts in real-time, rather than relying on historical patterns that may no longer hold. The "higher for longer" paradigm is not a transient market anomaly; it is a structural shift that necessitates a deep understanding of its implications for model design, calibration, and risk management. This article delves into the theoretical underpinnings and practical considerations for algorithmic traders seeking to thrive in this new, demanding macro regime.

## Theoretical Foundation

The concept of a "macro regime shift" is central to understanding the challenges and opportunities presented by the "higher for longer" environment. A financial regime can be defined as a period during which the underlying statistical properties of asset returns (e.g., mean, variance, covariance, correlation structure, volatility clustering) remain relatively stable. A regime shift implies a significant change in these properties, rendering models trained on previous regimes potentially suboptimal or even detrimental. The "higher for longer" regime, characterized by persistent inflation, elevated interest rates, and potentially slower growth, fundamentally alters the discount rates applied to future cash flows, the cost of leverage, and the relative attractiveness of different asset classes.

From a theoretical perspective, this necessitates a move away from static model assumptions towards dynamic, regime-adaptive frameworks. One powerful tool for this is the Hidden Markov Model (HMM). An HMM assumes that the observed market data (e.g., asset returns, volatility) are generated by an underlying, unobservable (hidden) state or regime. The model then estimates the probability of being in each regime at any given time and the transition probabilities between regimes. For example, we might define regimes such as "low inflation/low rates," "high inflation/high rates," "stagflation," or "growth." Each regime would have its own set of parameters governing asset return distributions.

Let $S_t \in \{1, \dots, K\}$ be the hidden state at time $t$, where $K$ is the number of regimes. The evolution of the hidden states is governed by a transition probability matrix $A = [a_{ij}]$, where $a_{ij} = P(S_{t+1} = j \mid S_t = i)$. The observed market data $O_t$ (e.g., daily returns of a cross-section of assets) are assumed to be generated by a probability distribution $P(O_t \mid S_t = k)$, which is specific to each regime. For instance, in a "higher for longer" inflationary regime, we might expect higher average returns for commodities and value stocks, and lower average returns for growth stocks, along with higher overall market volatility and different correlation structures.
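To make the role of the transition matrix concrete, the following minimal sketch derives two quantities from a row-stochastic matrix of regime-to-regime probabilities: the expected time spent in each regime and the long-run share of time in each regime. The matrix values are hypothetical and chosen only for illustration; they are not estimated from market data.

```python
import numpy as np

# Hypothetical 3-regime transition matrix; row i holds P(S_{t+1} = j | S_t = i).
A = np.array([
    [0.95, 0.04, 0.01],
    [0.03, 0.95, 0.02],
    [0.02, 0.03, 0.95],
])

# Expected number of consecutive periods spent in regime i: 1 / (1 - a_ii).
expected_duration = 1.0 / (1.0 - np.diag(A))

# Stationary distribution: the left eigenvector of A with eigenvalue 1,
# normalized so that its entries sum to one.
eigvals, eigvecs = np.linalg.eig(A.T)
stationary = np.real(eigvecs[:, np.argmin(np.abs(eigvals - 1.0))])
stationary = stationary / stationary.sum()

print("Expected regime duration (periods):", expected_duration)
print("Long-run regime probabilities:", stationary)
```

Highly persistent regimes (diagonal entries close to 1) are what make regime identification useful for trading: if regimes switched every few days, any detected state would be stale before a strategy could act on it.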

The core challenge is to estimate the hidden states and their associated parameters from the observed data. The Baum-Welch algorithm (the Expectation-Maximization algorithm applied to HMMs) is commonly used for this. Once the HMM is trained, we can use the Viterbi algorithm to infer the most likely sequence of hidden states given a sequence of observations, or the forward-backward algorithm to compute the probability of being in each state at any given time. Both draw on the full observation sequence, so live trading decisions should rely on the forward (filtered) probabilities, which condition only on data available up to the current time. This allows algorithmic models to dynamically adjust their parameters, portfolio allocations, or trading signals based on the identified current regime. For instance, a momentum strategy might perform well in a "growth" regime but poorly in a "stagflation" regime, requiring a regime-dependent weighting or even a complete deactivation.
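The forward pass can be sketched in a few lines. The example below is a simplified illustration, assuming univariate Gaussian emissions and known parameters (in practice these would come from Baum-Welch); the two regimes, their means and standard deviations, and the return series are all hypothetical.

```python
import numpy as np

def gaussian_pdf(x, mean, std):
    """Density of a univariate normal distribution (vectorized over regimes)."""
    z = (x - mean) / std
    return np.exp(-0.5 * z * z) / (std * np.sqrt(2.0 * np.pi))

def forward_filter(obs, startprob, transmat, means, stds):
    """Return P(S_t = k | O_1..O_t): rows are time steps, columns are regimes."""
    obs = np.asarray(obs, dtype=float)
    filtered = np.zeros((len(obs), len(startprob)))
    prior = np.asarray(startprob, dtype=float)
    for t, x in enumerate(obs):
        if t > 0:
            prior = filtered[t - 1] @ transmat            # one-step-ahead prediction
        posterior = prior * gaussian_pdf(x, means, stds)  # Bayes update with today's data
        filtered[t] = posterior / posterior.sum()         # normalize to probabilities
    return filtered

# Hypothetical two-regime setup: calm (index 0) vs. turbulent (index 1) daily returns.
startprob = np.array([0.5, 0.5])
transmat = np.array([[0.95, 0.05], [0.10, 0.90]])
means = np.array([0.0005, -0.0005])
stds = np.array([0.005, 0.02])

returns = np.array([0.001, -0.002, 0.003, -0.045, 0.038, -0.051])
probs = forward_filter(returns, startprob, transmat, means, stds)
print(np.round(probs, 4))
```

Because each row uses only observations up to that time step, these probabilities can be computed live without look-ahead, unlike a Viterbi path or smoothed probabilities computed over a full historical sample.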

Consider a simple case where we model the asset return vector $O_t$ as Gaussian conditional on the hidden regime $S_t$:

$$O_t \mid S_t = k \sim \mathcal{N}(\mu_k, \Sigma_k)$$

where $\mu_k$ is the mean return vector and $\Sigma_k$ is the covariance matrix for regime $k$. In the "higher for longer" regime, we anticipate $\mu_k$ for interest-rate sensitive assets to shift, and $\Sigma_k$ to exhibit higher levels of volatility and different cross-asset correlations. For example, in a regime of persistent inflation and hawkish central banks, the correlation between equities and bonds might turn positive, breaking the traditional diversification benefit. This is a critical observation for portfolio construction, as the efficacy of traditional 60/40 portfolios diminishes when this negative correlation breaks down.
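The diversification point can be checked with simple arithmetic. The sketch below compares the volatility of a 60/40 portfolio under a negative and a positive equity-bond correlation; the volatilities and correlation values are hypothetical round numbers, not estimates.

```python
import numpy as np

def portfolio_vol(weights, vols, corr):
    """Portfolio volatility from asset volatilities and a correlation matrix."""
    cov = np.outer(vols, vols) * corr   # covariance matrix: sigma_i * sigma_j * rho_ij
    return float(np.sqrt(weights @ cov @ weights))

def corr_matrix(rho):
    """2x2 correlation matrix for an equity-bond pair."""
    return np.array([[1.0, rho], [rho, 1.0]])

weights = np.array([0.6, 0.4])   # 60% equities, 40% bonds
vols = np.array([0.16, 0.07])    # hypothetical annualized volatilities

vol_neg = portfolio_vol(weights, vols, corr_matrix(-0.3))  # bonds hedge equities
vol_pos = portfolio_vol(weights, vols, corr_matrix(0.3))   # the hedge breaks down

print(f"60/40 vol with rho = -0.3: {vol_neg:.4f}")
print(f"60/40 vol with rho = +0.3: {vol_pos:.4f}")
```

With identical weights and asset volatilities, the sign flip in correlation alone raises portfolio risk, which is why a regime-specific covariance matrix matters for allocation.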

Furthermore, the "higher for longer" environment impacts the very nature of risk. The cost of carry for leveraged strategies increases, and the sensitivity of long-duration assets to interest rate changes becomes more pronounced. This implies that risk metrics, such as Value-at-Risk (VaR) or Conditional Value-at-Risk (CVaR), must also be regime-adaptive. A VaR calculated under a "low volatility, low rates" regime will significantly underestimate risk in a "higher for longer, high volatility" regime. Algorithmic models must therefore incorporate dynamic risk budgeting and position sizing, adjusting not just their alpha signals but also their risk constraints based on the identified macro regime. This rigorous theoretical grounding allows for the construction of more robust and adaptive algorithmic strategies.
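As a rough illustration of regime-adaptive risk measurement, the sketch below computes a one-day parametric VaR under two regime volatilities and then under a probability-weighted blend. It assumes zero-mean Gaussian returns within each regime and approximates the regime mixture by a single Gaussian with the blended variance; the regime names and volatility figures are hypothetical.

```python
from statistics import NormalDist

def parametric_var(position_value, daily_vol, confidence=0.99):
    """One-day Gaussian VaR (zero mean assumed), returned as a positive loss amount."""
    z = NormalDist().inv_cdf(confidence)
    return position_value * daily_vol * z

# Hypothetical daily return volatilities for each regime.
regime_vol = {"low_vol_low_rates": 0.008, "higher_for_longer": 0.016}

def regime_weighted_var(position_value, regime_probs, confidence=0.99):
    """VaR using the probability-weighted variance across regimes.

    This treats the regime mixture as one Gaussian, which is an approximation:
    a true mixture has fatter tails than a normal with the same variance.
    """
    variance = sum(p * regime_vol[name] ** 2 for name, p in regime_probs.items())
    return parametric_var(position_value, variance ** 0.5, confidence)

calm_var = parametric_var(1_000_000, regime_vol["low_vol_low_rates"])
hfl_var = parametric_var(1_000_000, regime_vol["higher_for_longer"])
blended_var = regime_weighted_var(
    1_000_000, {"low_vol_low_rates": 0.3, "higher_for_longer": 0.7}
)

print(f"VaR (calm regime):      {calm_var:,.0f}")
print(f"VaR (higher for longer): {hfl_var:,.0f}")
print(f"VaR (70% HFL blend):    {blended_var:,.0f}")
```

A risk limit calibrated to the calm-regime figure would be breached far more often than intended once the high-volatility regime dominates.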

## How It Works in Practice

Translating the theoretical framework of regime-adaptive modeling into practical algorithmic trading strategies involves several key steps: data selection, model training, regime identification, and dynamic strategy adjustment. The "higher for longer" environment specifically impacts these steps by altering the characteristics of relevant data and demanding more frequent recalibration.

First, data selection is crucial. Beyond standard price and volume data, regime-adaptive models benefit from incorporating macro-economic indicators that are directly relevant to the "higher for longer" narrative. These might include inflation expectations (e.g., TIPS breakevens), real interest rates, central bank policy statements (parsed for hawkish/dovish sentiment), commodity prices (as inflation proxies), and economic growth indicators (e.g., PMI, GDP forecasts). These indicators serve as inputs to the HMM or as features in a machine learning model designed to classify regimes. For example, a persistent rise in core inflation and hawkish central bank rhetoric, as highlighted in the sources [1, 3, 6], would be strong signals for the "higher for longer" regime.

Second, model training for regime identification often involves historical data, but with a critical caveat: the training period must be carefully chosen to include diverse macro regimes, not just the recent past. Overfitting to the quantitative easing era would lead to models ill-equipped for the current environment. An HMM, for instance, would be trained on a long history of market data and macro indicators to learn the distinct statistical properties of each regime and the transition probabilities between them. The number of hidden states (regimes) is a hyperparameter that needs careful tuning, often through information criteria like BIC or AIC, or domain expertise.
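Choosing the number of regimes with BIC can be sketched as follows. The helper counts the free parameters of a Gaussian HMM with full covariance matrices and applies the standard BIC formula. The log-likelihood values are hypothetical placeholders; in practice they might come from the `score` method of fitted `hmmlearn` models.

```python
import math

def gaussian_hmm_n_params(n_states, n_features):
    """Free parameters of a Gaussian HMM with full covariance matrices."""
    start = n_states - 1                                      # initial probabilities
    trans = n_states * (n_states - 1)                         # transition matrix rows
    means = n_states * n_features                             # one mean vector per state
    covars = n_states * n_features * (n_features + 1) // 2    # symmetric covariances
    return start + trans + means + covars

def bic(log_likelihood, n_states, n_features, n_obs):
    """Bayesian information criterion; lower is better."""
    k = gaussian_hmm_n_params(n_states, n_features)
    return -2.0 * log_likelihood + k * math.log(n_obs)

# Hypothetical total log-likelihoods for models with 2, 3 and 4 regimes.
candidate_loglik = {2: 6150.0, 3: 6230.0, 4: 6245.0}
scores = {
    k: bic(ll, n_states=k, n_features=2, n_obs=1000)
    for k, ll in candidate_loglik.items()
}
best_k = min(scores, key=scores.get)

print(scores)
print("Selected number of regimes:", best_k)
```

The fourth regime improves the fit slightly in this made-up example, but not enough to justify its extra parameters, so the criterion settles on three. Domain judgment should still have the final say on whether the selected regimes are economically interpretable.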

Once the model is trained, regime identification occurs in real-time or near real-time. Using the latest incoming data, the model calculates the probability of the current market state belonging to each defined regime. This probability distribution then informs the algorithmic strategy. For instance, if the model assigns a high probability to the "higher for longer" regime (characterized by high inflation, rising rates, and potentially diverging economic growth), the strategy would adapt accordingly.
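One practical wrinkle is that regime probabilities hovering near 50% can flip a strategy back and forth. A possible mitigation, shown here as a sketch rather than a recommended rule, is a hysteresis band: the regime is treated as active only after its probability rises above an entry threshold and stays active until the probability falls below a lower exit threshold. The thresholds and the probability series are hypothetical.

```python
def confirmed_regime_flags(regime_probs, enter_above=0.7, exit_below=0.3):
    """Return one boolean per period: True while the regime is treated as active."""
    active = False
    flags = []
    for p in regime_probs:
        if not active and p >= enter_above:
            active = True          # strong enough evidence to switch on
        elif active and p <= exit_below:
            active = False         # strong enough evidence to switch off
        flags.append(active)
    return flags

# Hypothetical filtered probabilities of the "higher for longer" regime.
probs = [0.20, 0.55, 0.72, 0.64, 0.45, 0.35, 0.28, 0.51]
flags = confirmed_regime_flags(probs)
print(flags)
```

The wider the band, the fewer the switches and the lower the turnover, at the cost of reacting later to genuine regime changes.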

Finally, dynamic strategy adjustment is the core practical application. This could manifest in several ways:

1. Asset Allocation Shifts: Moving capital towards assets historically favored in inflationary, high-rate environments (e.g., commodities, value stocks, certain real assets) and away from those negatively impacted (e.g., long-duration growth stocks, fixed income with high interest rate sensitivity).

2. Parameter Tuning: Adjusting the lookback periods for momentum strategies (shorter in volatile regimes), sensitivity thresholds for mean-reversion strategies, or factors used in multi-factor models. For example, a momentum strategy might be more effective in a "growth" regime, while a value strategy might shine in a "stagflation-lite" environment [2, 4].

3. Risk Management: Tightening stop-loss levels, reducing position sizes, or increasing hedging activity during identified high-volatility, "higher for longer" regimes. The increased cost of carry in a higher rate environment also necessitates more efficient use of capital and potentially lower leverage.

4. Strategy Activation/Deactivation: Completely turning off certain strategies that are known to perform poorly in specific regimes. For example, a highly leveraged carry trade might be deactivated if the model signals a high probability of a "higher for longer" regime with increasing funding costs and volatility.
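The adjustments listed above can be applied as hard switches or as a soft blend. The sketch below illustrates the soft variant for asset allocation: target weights are defined per regime and mixed according to the current regime probabilities, so the portfolio moves gradually as evidence accumulates. The target weights and the gross exposure cap are hypothetical and would need to come from backtesting.

```python
import numpy as np

# Hypothetical target weights per regime; columns = [equities, bonds, commodities].
regime_targets = np.array([
    [0.60, 0.35, 0.05],   # regime 0: low inflation / low rates
    [0.35, 0.15, 0.30],   # regime 1: higher for longer (remainder held in cash)
    [0.70, 0.20, 0.10],   # regime 2: growth
])

def blended_weights(regime_probs, targets=regime_targets, max_gross=1.0):
    """Probability-weighted average of regime targets, capped at max_gross exposure."""
    probs = np.asarray(regime_probs, dtype=float)
    probs = probs / probs.sum()              # guard against rounding in the inputs
    weights = probs @ targets
    gross = np.abs(weights).sum()
    if gross > max_gross:
        weights = weights * (max_gross / gross)
    return weights

w = blended_weights([0.1, 0.8, 0.1])
print(dict(zip(["equities", "bonds", "commodities"], np.round(w, 3))))
```

Blending avoids abrupt, costly rebalances when the model is uncertain, while still converging to the regime-specific allocation as one probability approaches 1.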

Let's consider a simplified Python example of how one might use an HMM to identify regimes and then adjust a hypothetical equity momentum strategy. We'll use the hmmlearn library for the HMM.

```python
import numpy as np
import pandas as pd
from hmmlearn import hmm
from sklearn.preprocessing import StandardScaler

# --- 1. Simulate Data (in a real scenario, this would be actual market data) ---
# Three simulated regimes:
# Regime 0: Low Volatility, Low Return (e.g., "QE Era")
# Regime 1: High Volatility, Medium Return (e.g., "Higher for Longer")
# Regime 2: Medium Volatility, High Return (e.g., "Growth Boom")

np.random.seed(42)
n_samples = 1000
n_features = 2  # daily equity returns, daily bond returns

# Define HMM parameters for simulation
startprob = np.array([0.3, 0.4, 0.3])  # Initial regime probabilities
transmat = np.array([
    [0.8, 0.1, 0.1],  # From regime 0: stay in 0, go to 1, go to 2
    [0.1, 0.8, 0.1],  # From regime 1: go to 0, stay in 1, go to 2
    [0.1, 0.1, 0.8],  # From regime 2: go to 0, go to 1, stay in 2
])

# Mean and covariance for each regime (columns: equity, bond)
means = np.array([
    [0.0001, 0.00005],  # Regime 0: Low returns
    [0.0003, -0.0001],  # Regime 1: Higher equity return, negative bond return (inflationary)
    [0.0005, 0.0002],   # Regime 2: High returns
])
covars = np.array([
    [[0.0001, 0.00001], [0.00001, 0.00005]],   # Regime 0: Low volatility
    [[0.0004, -0.00005], [-0.00005, 0.0002]],  # Regime 1: Higher volatility, negative equity-bond correlation
    [[0.0002, 0.00002], [0.00002, 0.0001]],    # Regime 2: Medium volatility
])

# Generate sample data by setting the parameters directly (no fitting needed here)
model_sim = hmm.GaussianHMM(n_components=3, covariance_type="full", n_iter=100)
model_sim.startprob_ = startprob
model_sim.transmat_ = transmat
model_sim.means_ = means
model_sim.covars_ = covars

X_sim, Z_sim = model_sim.sample(n_samples)
df_sim = pd.DataFrame(X_sim, columns=['Equity_Returns', 'Bond_Returns'])
df_sim['True_Regime'] = Z_sim

print("Simulated Data Head:")
print(df_sim.head())

# --- 2. Prepare Data for HMM Training ---
# In a real scenario, X would be historical returns and possibly other macro features.
# For simplicity, we use the simulated returns.
scaler = StandardScaler()
X_scaled = scaler.fit_transform(df_sim[['Equity_Returns', 'Bond_Returns']])

# --- 3. Train HMM Model ---
n_components = 3  # Number of regimes to discover
model_hmm = hmm.GaussianHMM(n_components=n_components, covariance_type="full",
                            n_iter=100, random_state=42)
model_hmm.fit(X_scaled)

# --- 4. Predict Regimes ---
# Use the Viterbi algorithm to find the most likely sequence of states.
# Note: this decodes the whole sample at once, so each label uses future data.
hidden_states = model_hmm.predict(X_scaled)
df_sim['Predicted_Regime'] = hidden_states

print("\nHMM Model Parameters (Means for each regime, scaled):")
print(model_hmm.means_)
print("\nHMM Model Parameters (Covariances for each regime, scaled):")
print(model_hmm.covars_)
print("\nHMM Model Parameters (Transition Matrix):")
print(model_hmm.transmat_)

# --- 5. Implement Regime-Adaptive Strategy (Example: Simple Momentum) ---
# Define momentum lookback and threshold
momentum_lookback = 20     # days
momentum_threshold = 0.01  # 1% return over lookback

# Calculate momentum signal for equity (sum of daily returns over the lookback)
df_sim['Equity_Momentum'] = df_sim['Equity_Returns'].rolling(window=momentum_lookback).sum()

# Define strategy weights based on regime.
# In a real scenario, these would be optimized or derived from backtesting.
# Assumed interpretation of each label:
# Regime 0 (Low Volatility/QE): Moderate momentum, some bond exposure
# Regime 1 (Higher for Longer): Aggressive momentum (only if positive), short bonds
# Regime 2 (Growth Boom): Aggressive momentum, some bond exposure
# Caution: fitted HMM labels are arbitrary, so predicted label 1 need not match
# simulated Regime 1. Inspect means_ and covars_ before assigning weights.
regime_weights = {
    0: {'equity_momentum_weight': 0.5, 'bond_weight': 0.2, 'momentum_multiplier': 1.0},
    1: {'equity_momentum_weight': 0.8, 'bond_weight': -0.1, 'momentum_multiplier': 1.5},  # Short bonds in HFL
    2: {'equity_momentum_weight': 0.7, 'bond_weight': 0.1, 'momentum_multiplier': 1.2},
}

# Look up regime-specific weights for every day (vectorized; this replaces the
# row-by-row chained .iloc assignment, which does not reliably write to the frame).
regime = df_sim['Predicted_Regime']
equity_by_regime = {k: v['equity_momentum_weight'] * v['momentum_multiplier']
                    for k, v in regime_weights.items()}
bond_by_regime = {k: v['bond_weight'] for k, v in regime_weights.items()}

# Hold equity only when momentum exceeds the threshold (NaN warm-up rows count as flat)
has_momentum = df_sim['Equity_Momentum'] > momentum_threshold
df_sim['Strategy_Equity_Weight'] = np.where(has_momentum, regime.map(equity_by_regime), 0.0)
df_sim['Strategy_Bond_Weight'] = regime.map(bond_by_regime)

# Daily strategy return (simplified, no transaction costs).
# Weights are lagged one day so that today's return is earned on yesterday's signal.
df_sim['Strategy_Return'] = (
    df_sim['Strategy_Equity_Weight'].shift(1).fillna(0.0) * df_sim['Equity_Returns']
    + df_sim['Strategy_Bond_Weight'].shift(1).fillna(0.0) * df_sim['Bond_Returns']
)

print("\nStrategy Performance (Cumulative Returns):")
df_sim['Cumulative_Strategy_Return'] = (1 + df_sim['Strategy_Return']).cumprod()
print(df_sim[['Cumulative_Strategy_Return', 'True_Regime', 'Predicted_Regime']].tail())

# Example of how to interpret HMM output:
# If model_hmm.means_[1] (for 'Regime 1') shows higher equity returns and lower/negative bond returns,
# and higher equity volatility, that could be interpreted as the 'Higher for Longer' regime.
# The strategy then adjusts weights accordingly.
```

In this example, the HMM identifies different market states based on the statistical properties of equity and bond returns. The `regime_weights` dictionary then dictates how a simple momentum strategy adapts. In a real-world application, these weights would be derived from extensive backtesting and optimization, so that the strategy's response to each identified regime is robust and profitable. This approach provides a dynamic, data-driven mechanism for algorithmic strategies to navigate the complexities of a "higher for longer" macro regime, moving beyond static assumptions to embrace the inherent non-stationarity of financial markets. Tools like QuantArtisan's Regime-Adaptive Portfolio framework can help automate such dynamic allocation strategies, leveraging techniques like Hidden Markov Models to identify and respond to changing market conditions.
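One caveat when comparing predicted regimes with simulated ones is that an HMM's state labels are arbitrary: the fitted state 1 need not correspond to the simulated regime 1. The sketch below shows one simple way to align the two labelings, by trying every permutation and keeping the one with the highest agreement. The function name and the toy label sequences are hypothetical, and brute-force search is only sensible for a small number of regimes.

```python
from itertools import permutations

import numpy as np

def align_labels(true_states, predicted_states, n_states):
    """Find the relabeling of predicted states that best matches the true states.

    Returns (mapping, accuracy), where mapping[predicted_label] = true_label.
    """
    true_states = np.asarray(true_states)
    predicted_states = np.asarray(predicted_states)
    best_perm, best_acc = None, -1.0
    for perm in permutations(range(n_states)):
        mapped = np.array(perm)[predicted_states]   # perm[j] is the label assigned to predicted state j
        acc = float(np.mean(mapped == true_states))
        if acc > best_acc:
            best_perm, best_acc = perm, acc
    return dict(enumerate(best_perm)), best_acc

# Toy example: the predicted labels are a shuffled version of the true ones, with one error.
true_states = [0, 0, 1, 1, 2, 2, 2, 0]
predicted = [2, 2, 0, 0, 1, 1, 0, 2]
mapping, accuracy = align_labels(true_states, predicted, n_states=3)
print(mapping, accuracy)
```

With real market data there is no ground-truth regime to align against, so the equivalent step is to inspect each state's fitted means and covariances and assign the economic interpretation by hand.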

## Implementation Considerations for Quant Traders

Implementing regime-adaptive algorithmic strategies in a "higher for longer" environment presents several critical considerations for quant traders, extending beyond the theoretical and practical aspects discussed. These considerations span data quality, model robustness, computational demands, and the inherent challenges of real-world deployment.

Data Requirements and Quality: The efficacy of regime-adaptive models hinges critically on the quality and breadth of input data. Beyond standard market data (prices, volumes), incorporating macro-economic indicators, as suggested earlier, introduces new data acquisition and cleaning challenges. Sources for inflation expectations, central bank communications, and global economic growth metrics can be disparate and require careful harmonization. Furthermore, the "higher for longer" regime implies a shift in fundamental economic relationships, meaning that historical data from vastly different macro environments (e.g., the pre-GFC era or the quantitative easing period) might not be directly representative. Quant traders must critically evaluate the relevance of their historical training data, potentially employing techniques like "regime-aware" data windows or weighting recent data more heavily. Data latency and synchronization across diverse datasets also become paramount, as timely regime identification is crucial for effective strategy adaptation [3].
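Weighting recent data more heavily can be done with exponentially decaying observation weights. The sketch below is one possible scheme, not a prescribed method: weights halve every `half_life` observations and are then used to compute a weighted mean and covariance. The half-life value and the synthetic return history are hypothetical.

```python
import numpy as np

def exponential_weights(n_obs, half_life):
    """Weights for observations ordered oldest to newest; they sum to one."""
    age = np.arange(n_obs - 1, -1, -1)   # the newest observation has age 0
    w = 0.5 ** (age / half_life)
    return w / w.sum()

def weighted_mean_cov(X, weights):
    """Weighted mean vector and covariance matrix of the rows of X."""
    X = np.asarray(X, dtype=float)
    mean = weights @ X
    centered = X - mean
    cov = (centered * weights[:, None]).T @ centered
    return mean, cov

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 2)) * [0.01, 0.005]    # synthetic stand-in for a return history
w = exponential_weights(len(X), half_life=126)   # hypothetical half-life of about six months
mean, cov = weighted_mean_cov(X, w)

print("Weight on newest vs. oldest observation:", w[-1], w[0])
print("Weighted covariance:\n", cov)
```

A short half-life adapts quickly to the current environment but leaves fewer effective observations, which makes the estimates noisier; the choice is a bias-variance trade-off.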

Model Robustness and Overfitting: While HMMs offer a powerful framework, they are not immune to overfitting, especially when the number of regimes or features is high relative to the available data. The "higher for longer" regime might be a novel combination of factors, making it challenging to find sufficiently long historical analogues. Therefore, rigorous out-of-sample testing, cross-validation, and robustness checks are essential. Quant traders must guard against models that merely memorize past regime transitions rather than capturing the underlying economic drivers. Techniques such as regularization, ensemble methods (e.g., combining multiple HMMs or other regime detection models), and Bayesian approaches (which naturally incorporate uncertainty) can enhance model robustness. Furthermore, the interpretation of identified regimes is critical; simply having distinct statistical properties is not enough – there must be a plausible economic narrative linking the regime to the observed market behavior, especially concerning persistent inflation and higher rates [4, 6].
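Out-of-sample testing for time series is commonly organized as a walk-forward scheme, where each test window lies strictly after the data used for training. The generator below is a minimal sketch of a rolling variant; the function name and window sizes are hypothetical.

```python
def walk_forward_splits(n_obs, train_size, test_size):
    """Yield (train_indices, test_indices) with each test window after its training window."""
    start = 0
    while start + train_size + test_size <= n_obs:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size   # roll both windows forward by one test block

splits = list(walk_forward_splits(n_obs=1000, train_size=500, test_size=100))
for train, test in splits:
    print(f"train [{train.start}, {train.stop})  test [{test.start}, {test.stop})")
```

For each split, the regime model would be refit on the training indices only and evaluated on the test indices, so that no information from the test window leaks into parameter estimates or regime labels.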

Computational Costs and Real-time Processing: Regime-adaptive models, particularly those involving HMMs or more complex machine learning architectures, can be computationally intensive. Training these models on large datasets with many features and potential regimes requires significant processing power. More importantly, real-time or near real-time regime identification and subsequent strategy adjustments demand efficient inference algorithms and robust infrastructure. The latency between observing new market data, updating the regime probabilities, and issuing new trading signals must be minimized, especially for higher-frequency strategies. This necessitates optimized code, potentially GPU acceleration for certain machine learning models, and scalable cloud computing resources. The continuous monitoring of model performance and regime stability also adds to the computational burden, requiring automated pipelines for retraining and validation.

Dynamic Risk Management and Portfolio Construction: The "higher for longer" regime inherently implies greater uncertainty and potentially higher volatility. This necessitates a dynamic approach to risk management. Static risk limits or fixed position sizing will likely be inadequate. Instead, algorithmic models should incorporate regime-dependent risk budgeting, adjusting VaR/CVaR calculations, leverage levels, and stop-loss thresholds based on the identified regime's characteristics. For instance, in a "higher for longer" regime characterized by increased inflation and interest rate volatility, the model might automatically reduce exposure to long-duration assets and increase hedging activity. Furthermore, portfolio construction must also be regime-adaptive. The traditional diversification benefits of certain asset classes (e.g., equities and bonds) may break down or even reverse in specific regimes [1]. Quant traders must therefore continuously re-evaluate correlation structures and co-dependencies, dynamically adjusting asset allocations to maintain desired risk-return profiles. This requires a sophisticated understanding of how macro factors influence cross-asset relationships, moving beyond simple historical averages.
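Regime-dependent leverage can be illustrated with a simple volatility-targeting rule. This is a sketch under stated assumptions, not a complete risk framework: expected variance is the probability-weighted average of regime variances (zero-mean returns assumed), and leverage is scaled so that expected volatility matches a target, subject to a cap. All numbers are hypothetical.

```python
def regime_leverage(regime_probs, regime_vols, target_vol=0.10, max_leverage=2.0):
    """Leverage that targets a portfolio volatility given regime probabilities."""
    expected_var = sum(p * v ** 2 for p, v in zip(regime_probs, regime_vols))
    leverage = target_vol / expected_var ** 0.5
    return min(leverage, max_leverage)   # never exceed the leverage cap

# Hypothetical annualized strategy volatilities: [calm regime, higher-for-longer regime].
regime_vols = [0.08, 0.20]

lev_calm = regime_leverage([0.9, 0.1], regime_vols)
lev_hfl = regime_leverage([0.1, 0.9], regime_vols)

print(f"Leverage when calm regime is likely: {lev_calm:.2f}")
print(f"Leverage when HFL regime is likely:  {lev_hfl:.2f}")
```

The same probabilities that drive alpha signals therefore also drive position size, so exposure shrinks automatically as the high-volatility regime becomes more likely.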

Monitoring and Adaptability: Finally, even the most sophisticated regime-adaptive model is not a "set it and forget it" solution. The macro environment is continuously evolving, and what constitutes "higher for longer" today might shift tomorrow. Continuous monitoring of model performance, regime stability, and the underlying economic indicators is paramount. Quant traders must establish clear criteria for when a model needs retraining, recalibration, or even a fundamental redesign. This includes monitoring the predictive power of the model's regime classifications, the profitability of the regime-specific strategies, and any shifts in the statistical properties within an identified regime that suggest a further sub-regime or an entirely new state. The ability to quickly adapt and retrain models in response to new information – such as unexpected central bank policy shifts or geopolitical events – is a defining characteristic of successful algorithmic trading in this challenging "higher for longer" era.

## Key Takeaways

- Regime Shifts are Structural: The "higher for longer" environment is a fundamental macro regime shift, not a temporary market anomaly, altering the statistical properties of asset returns and demanding adaptive algorithmic strategies [2, 4, 6].

- Embrace Non-Stationarity: Algorithmic models must move beyond static assumptions and incorporate dynamic, regime-adaptive frameworks, such as Hidden Markov Models (HMMs), to account for changing market dynamics [3].

- Macro Data Integration is Critical: Successful regime identification requires incorporating relevant macro-economic indicators (e.g., inflation expectations, central bank sentiment, real interest rates) alongside traditional market data [1, 4].

- Dynamic Strategy Adjustment: Strategies must adapt their asset allocation, parameter tuning, and risk management based on the identified macro regime, potentially shifting exposure to assets favored in inflationary, high-rate environments [1, 5].

- Robustness and Overfitting: Rigorous out-of-sample testing, cross-validation, and techniques like regularization are crucial to ensure model robustness and prevent overfitting to past, potentially irrelevant, market conditions.

- Computational Efficiency is Key: Real-time regime identification and strategy execution demand efficient algorithms, optimized code, and scalable infrastructure to minimize latency and ensure timely responses to market changes.

- Continuous Monitoring and Recalibration: Even advanced models require continuous monitoring, performance validation, and periodic retraining to remain effective as the macro environment continues to evolve.

## Applied Ideas

The frameworks discussed above are not merely academic exercises; they translate directly into deployable trading logic. Here are concrete next steps for practitioners:

- Backtest first: Validate any regime-detection or signal-generation approach with walk-forward analysis before committing capital.

- Start small: Deploy with fractional position sizing and paper-trade for at least one full market cycle.

- Monitor regime shifts: Set automated alerts for when your model detects a regime change; manual review before large rebalances is prudent.

- Iterate on KPIs: Track Sharpe, Sortino, max drawdown, and win rate weekly. If any metric degrades beyond your predefined threshold, pause and re-evaluate.

- Combine signals: The strongest edges come from combining uncorrelated signals, so pair the ideas in this post with your existing alpha sources.
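The KPIs named in the list above can be computed from a series of periodic returns as sketched below. The conventions are assumptions that vary between shops: a zero risk-free rate, 252 periods per year, downside deviation measured against a 0% target, and drawdown measured from the running peak of the compounded equity curve. The sample returns are made up.

```python
import numpy as np

def performance_kpis(returns, periods_per_year=252):
    """Sharpe, Sortino, max drawdown and win rate for a series of periodic returns."""
    r = np.asarray(returns, dtype=float)
    ann = np.sqrt(periods_per_year)
    sharpe = ann * r.mean() / r.std(ddof=1)
    downside = np.sqrt(np.mean(np.minimum(r, 0.0) ** 2))   # downside deviation vs. 0% target
    sortino = ann * r.mean() / downside
    equity = np.cumprod(1.0 + r)
    drawdown = equity / np.maximum.accumulate(equity) - 1.0
    return {
        "sharpe": float(sharpe),
        "sortino": float(sortino),
        "max_drawdown": float(drawdown.min()),
        "win_rate": float(np.mean(r > 0)),
    }

daily = np.array([0.01, -0.02, 0.015, 0.005, -0.01, 0.02])
kpis = performance_kpis(daily)
print(kpis)
```

Computing these per identified regime, rather than only over the full sample, shows whether a strategy's edge is concentrated in one regime and would vanish if that regime ended.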

## Sources & Research

6 articles that informed this post:

1. Navigating Persistent Inflation with Systematic Strategies: The 'NACHO' Trade and Delayed Fed Cuts
2. Algorithmic Strategies Confront Momentum-Driven Market Amidst 'Higher for Longer' Inflation
3. Navigating 2026 Macro Regimes: Algorithmic Strategies for Evolving Central Bank Policies & CTA Performance
4. Navigating 2026's 'Stagflation-Lite' Regime with Algorithmic Macro Strategies
5. Algorithmic Strategies Navigate Tech Pullback & 'Higher for Longer' Fed Stance on May 7th
6. Navigating 2026 Macro: Systematic Strategies for Persistent Inflation and 'Higher for Longer' Rates
