# Building Resilient Algos: Practical Strategies for Data Redundancy, Validation, and Contingency Planning in Live Trading

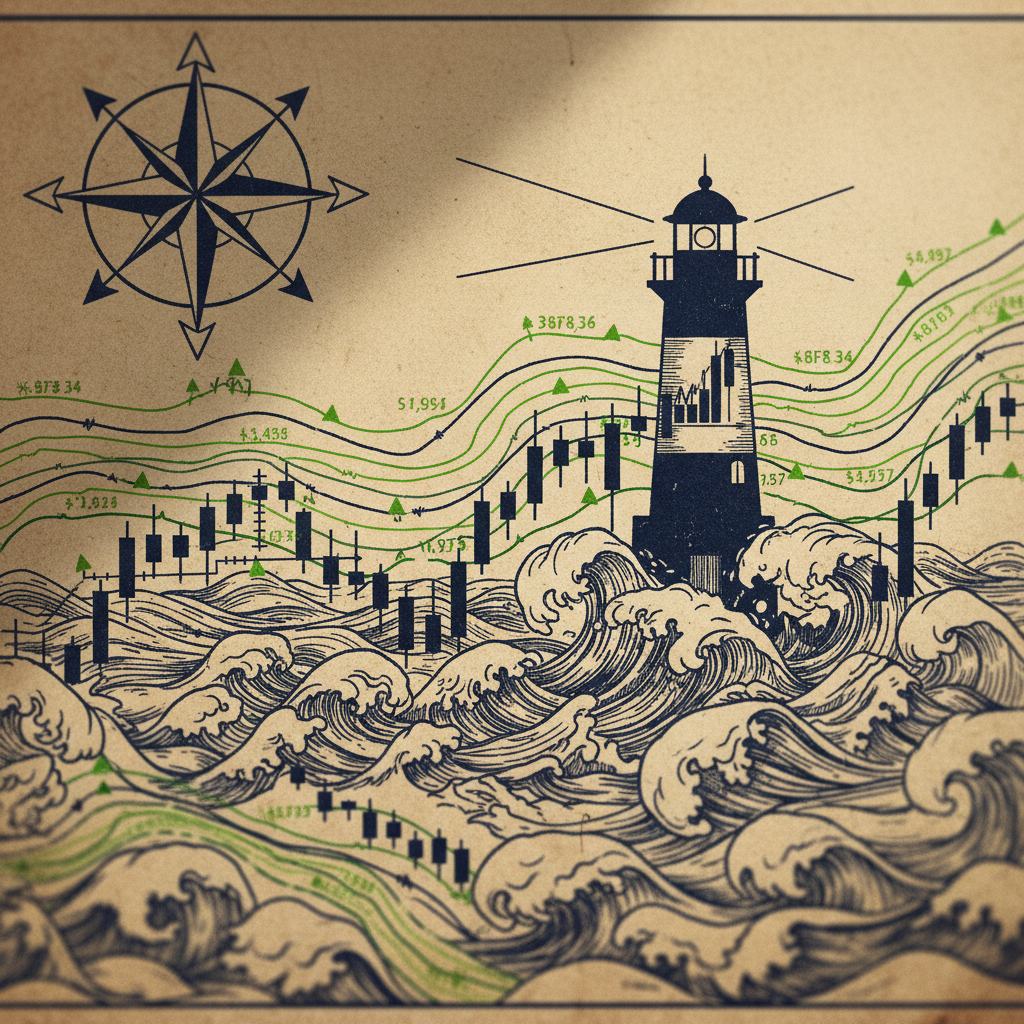

The bedrock of any successful algorithmic trading strategy is not merely the elegance of its mathematical models or the sophistication of its execution logic, but the unwavering integrity of the data upon which it operates. In the high-stakes arena of quantitative finance, data is the lifeblood, the raw material from which signals are extracted, decisions are forged, and profits are realized. Yet, as recent events have starkly reminded us, this critical dependency is also a profound vulnerability. The "Day Without Data" scenario, where market data and news feeds are entirely absent, forces a re-evaluation of signal robustness and fail-safe protocols for every quant strategy [5]. This article delves into the practical, actionable strategies for building algorithmic resilience through robust data redundancy, rigorous validation, and comprehensive contingency planning, ensuring that even when the data stream falters, your strategies do not.

## Why This Matters Now

The recent disruptions in data availability have cast a harsh spotlight on the fragility inherent in data-dependent systems. Imagine the frustration when an algorithmic stock spotlight, designed to identify lucrative trading opportunities, is suddenly on hold due to a complete absence of specific stock data [1]. This isn't a hypothetical scenario; it's a real-world impediment that directly translates into missed opportunities and potential losses for strategies reliant on timely and relevant market information. The dependency is absolute: without data, quantitative analysis grinds to a halt.

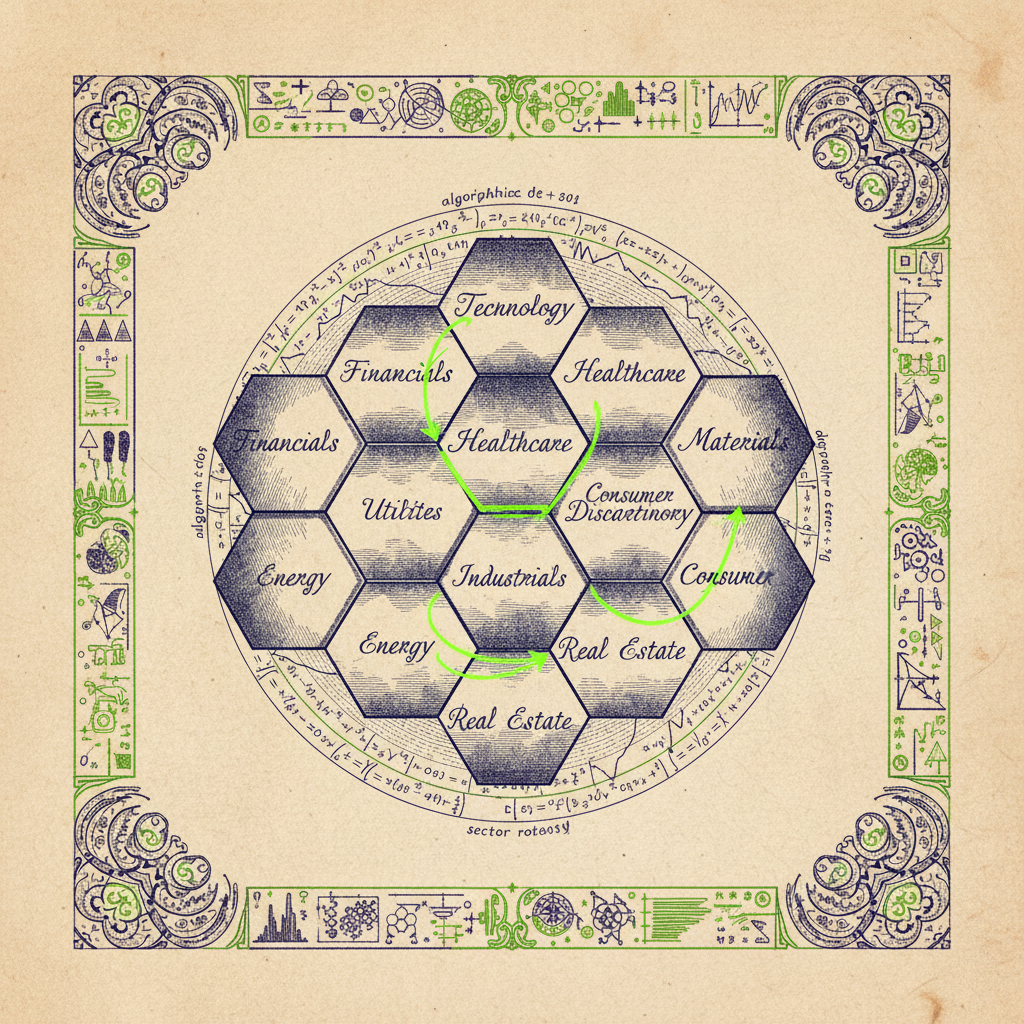

Compounding the problem, algorithmic sector rotation analyses have been halted entirely by a data outage [4]. The implications are severe: quantitative factor implications become speculative, economic cycle interpretations are compromised, and the very foundation of strategic asset allocation falters. The absence of explicit performance data forces strategies to rely on broader, often less granular, economic indicators for regime detection, which can introduce significant lag and reduce precision [2].

These aren't isolated incidents. A technical issue with data feeds can render an algorithmic stock spotlight unavailable, interrupting the usual data-driven analysis and leaving traders in the dark [3]. The cumulative effect of such disruptions underscores a fundamental truth: the reliability of an algorithmic trading system is directly proportional to the resilience of its data infrastructure. When market data and news feeds are entirely absent, quant strategies face an unprecedented void, forcing a re-evaluation of their core assumptions and fail-safe mechanisms [5]. It's no longer sufficient to merely consume data; we must architect systems that anticipate, mitigate, and recover from data failures with the same rigor applied to model development.

## The Strategy Blueprint

Building resilient algorithmic trading systems against data integrity issues requires a multi-faceted approach encompassing redundancy, validation, and a robust contingency framework. This blueprint outlines a practical, step-by-step methodology for hardening your data pipeline and safeguarding your trading operations.

1. Multi-Source Data Ingestion with Redundancy:

The first line of defense against data outages is to eliminate single points of failure in your data acquisition. Relying on a single data vendor or a single API endpoint is a recipe for disaster, as recent events have demonstrated [3]. Implement a multi-source ingestion strategy where critical data streams (e.g., real-time quotes, historical prices, fundamental data, news feeds) are sourced from at least two, preferably three, independent providers. These providers should ideally have diverse infrastructure and geographical footprints to minimize correlated failures. For instance, if you're sourcing equity price data, consider a primary vendor for high-frequency data and a secondary vendor for end-of-day or less frequent updates. The system should be designed to fail over automatically to an alternative source if the primary source experiences an interruption or delivers corrupted data. This redundancy extends beyond vendors to internal infrastructure: data ingestion processes should be distributed across multiple servers or cloud instances.

2. Comprehensive Data Validation at Ingestion and Storage:

Data validation is not a one-time check; it's a continuous process that occurs at multiple stages of the data pipeline. Upon ingestion, every data point must undergo a series of automated checks. These include:

- ▸ Schema Validation: Ensuring that the incoming data conforms to the expected structure and data types.

- ▸ Completeness Checks: Verifying that all expected fields are present and not null.

- ▸ Range and Plausibility Checks: For numerical data, checking if values fall within reasonable bounds (e.g., price cannot be negative, volume cannot be excessively high or low relative to historical averages).

- ▸ Consistency Checks: Comparing data points from different sources for agreement. For example, if two vendors report a closing price for the same stock, the two values should match within a defined tolerance.

- ▸ Staleness Detection: Identifying data that hasn't updated within an expected timeframe, indicating a potential feed issue [1].

- ▸ Outlier Detection: Using statistical methods (e.g., Z-scores, IQR) to flag extreme values that might indicate data corruption or erroneous entries.
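The consistency and outlier checks in the list above can be sketched as follows. This is a minimal illustration, not a production implementation: the function names `sources_agree` and `iqr_outliers`, the 0.1% default tolerance, and the conventional 1.5×IQR fence are assumptions chosen for the example.

```python
import pandas as pd


def sources_agree(price_a: float, price_b: float, rel_tol: float = 0.001) -> bool:
    """True when two vendor prices differ by at most rel_tol relative to their midpoint."""
    mid = (price_a + price_b) / 2.0
    if mid <= 0:
        return False  # non-positive prices fail the plausibility check anyway
    return abs(price_a - price_b) / mid <= rel_tol


def iqr_outliers(values: pd.Series, k: float = 1.5) -> pd.Series:
    """Boolean mask of points outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, q3 = values.quantile(0.25), values.quantile(0.75)
    iqr = q3 - q1
    return (values < q1 - k * iqr) | (values > q3 + k * iqr)


# Hypothetical closing prices with one suspicious print.
closes = pd.Series([100.1, 100.3, 99.9, 100.2, 150.0, 100.0])
print(sources_agree(100.10, 100.12))  # small divergence, inside tolerance
print(sources_agree(100.10, 101.50))  # large divergence, outside tolerance
print(iqr_outliers(closes).tolist())
```

In practice the tolerance would likely be set per instrument, since acceptable divergence for an illiquid small cap differs from that of a heavily traded index constituent.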

Once data passes ingestion validation, it should be stored in a robust, versioned database. Immutable data stores or append-only logs are highly recommended for historical data, preventing accidental modification and enabling point-in-time recovery. Real-time data streams should be buffered and persisted with high availability.

3. Real-time Data Quality Monitoring and Alerting:

Validation checks are only effective if their outcomes are continuously monitored. Implement a dedicated data quality monitoring system that tracks key metrics across all data streams. This system should provide real-time dashboards visualizing data latency, completeness rates, error rates, and divergence between redundant sources. Automated alerts must be configured to trigger immediate notifications (e.g., SMS, email, PagerDuty) to the operations team or quantitative researchers when predefined thresholds are breached. For example, if the latency of a primary data feed exceeds 500ms for more than 5 seconds, or if the divergence between two price feeds for a critical instrument exceeds 0.1%, an alert should fire. The monitoring system should also track the health of the data ingestion infrastructure itself, including CPU usage, memory, and network connectivity.
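The "threshold breached for a sustained period" rule described above can be sketched as a small stateful check. This is an illustrative sketch only: the class name `SustainedThresholdAlert` and the helper `divergence_pct` are hypothetical, and the sample values simply mirror the 500ms / 5 second / 0.1% figures from the example in the text.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SustainedThresholdAlert:
    """Fires when a metric stays above `threshold` for more than `min_duration_s` seconds."""
    threshold: float
    min_duration_s: float
    _breach_started: Optional[float] = field(default=None, init=False)

    def update(self, value: float, now_s: float) -> bool:
        if value <= self.threshold:
            self._breach_started = None   # back within limits: reset the timer
            return False
        if self._breach_started is None:
            self._breach_started = now_s  # first sample over the threshold
        return (now_s - self._breach_started) > self.min_duration_s


def divergence_pct(price_a: float, price_b: float) -> float:
    """Absolute percentage difference between two feeds, relative to the first."""
    return abs(price_a - price_b) / price_a * 100.0


latency_alert = SustainedThresholdAlert(threshold=500.0, min_duration_s=5.0)
# (time in seconds, observed latency in ms) for a hypothetical primary feed
samples = [(0.0, 620.0), (2.0, 700.0), (4.0, 650.0), (6.0, 810.0), (7.0, 120.0)]
fired = [latency_alert.update(latency_ms, t) for t, latency_ms in samples]
print(fired)
print(divergence_pct(100.00, 100.15) > 0.1)
```

Requiring the breach to persist avoids paging the operations team for a single slow tick, at the cost of a few seconds of detection delay.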

4. Contingency Planning and Failover Protocols:

A robust contingency plan is paramount for mitigating the impact of unavoidable data failures. This plan should detail specific, pre-defined actions for various data integrity scenarios:

- ▸ Partial Data Loss/Corruption: If a specific instrument's data is missing or corrupted from one source, the system should automatically switch to a redundant source. If all sources are affected for a single instrument, the strategy should either exclude that instrument from trading or revert to a last-known-good state for its parameters.

- ▸ Widespread Data Outage (e.g., "Day Without Data" [5]): In scenarios where critical market data feeds are broadly unavailable, the system must be capable of gracefully halting trading activity. This involves:

* Position Management: Immediately pausing new orders and potentially issuing market-on-close orders for existing positions based on pre-defined rules, or holding positions until data resumes.

* Strategy Suspension: Suspending all active algorithmic strategies that depend on the compromised data.

* Manual Intervention Protocols: Clearly defined escalation paths and procedures for human operators to take control, including communication channels with exchanges and brokers.

- ▸ Data Recovery and Reconciliation: Post-outage, a rigorous data recovery and reconciliation process is essential. This involves backfilling any missing data from reliable sources, re-validating the integrity of the recovered data, and reconciling trade logs with exchange confirmations to ensure all positions are accurately reflected. Strategies should not resume trading until data integrity is fully restored and verified.
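One way to keep these scenarios pre-defined rather than improvised is to encode them as an explicit decision function. The sketch below is an assumption-laden illustration: the `Action` enum, the `decide_action` function, and the vendor names are hypothetical, and a real system would also need the position-management and reconciliation steps listed above.

```python
from enum import Enum
from typing import Dict


class Action(Enum):
    TRADE_NORMALLY = "trade_normally"
    FAILOVER = "failover_to_backup"
    EXCLUDE_INSTRUMENT = "exclude_instrument"
    HALT_ALL = "halt_all_trading"


def decide_action(source_ok: Dict[str, bool], primary: str,
                  market_wide_outage: bool) -> Action:
    """Map per-source health for one instrument to a pre-defined contingency action."""
    if market_wide_outage:
        return Action.HALT_ALL            # widespread outage: stop gracefully
    if source_ok.get(primary, False):
        return Action.TRADE_NORMALLY      # primary feed is healthy
    if any(ok for name, ok in source_ok.items() if name != primary):
        return Action.FAILOVER            # at least one backup is healthy
    return Action.EXCLUDE_INSTRUMENT      # every source is bad for this instrument


print(decide_action({"VendorA": True, "VendorB": True}, "VendorA", False))
print(decide_action({"VendorA": False, "VendorB": True}, "VendorA", False))
print(decide_action({"VendorA": False, "VendorB": False}, "VendorA", False))
print(decide_action({"VendorA": True, "VendorB": True}, "VendorA", True))
```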

5. Offline Mode and Degraded Performance Strategies:

For certain strategies, it might be possible to operate in a "degraded performance" or "offline" mode during minor data disruptions. This involves pre-calculating and storing critical signals or parameters that can be used for a limited time without fresh data. For example, a long-term trend-following strategy might be able to operate for a few hours using the last known good data, whereas a high-frequency strategy cannot. This requires careful consideration of the strategy's sensitivity to stale data. Another approach is to have "fallback" strategies that rely on less granular or less frequent data, or even on external, human-driven signals during extreme events. The goal is to maintain some level of operational capability without risking erroneous trades due to bad data.
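A degraded mode of this kind can be sketched as a cached signal with a per-strategy maximum age. The names `CachedSignal` and `usable_signal` and the specific age limits are assumptions for illustration; the right tolerance for stale data depends entirely on the strategy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class CachedSignal:
    value: float
    computed_at: datetime


def usable_signal(cached: Optional[CachedSignal], max_age: timedelta,
                  now: datetime) -> Optional[float]:
    """Return the cached signal if it is no older than max_age, else None (stand aside)."""
    if cached is None or now - cached.computed_at > max_age:
        return None
    return cached.value


now = datetime(2024, 1, 2, 15, 0, tzinfo=timezone.utc)
cached = CachedSignal(value=0.8, computed_at=now - timedelta(hours=2))

# A slow trend-following strategy may tolerate a signal that is hours old...
trend_signal = usable_signal(cached, max_age=timedelta(hours=4), now=now)
# ...while a high-frequency strategy cannot.
hft_signal = usable_signal(cached, max_age=timedelta(seconds=1), now=now)
print(trend_signal, hft_signal)
```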

## Code Walkthrough

Implementing these principles requires robust coding practices, particularly in Python, a popular language for quantitative finance. Here, we'll illustrate concepts of data validation and multi-source ingestion.

First, let's consider a simple data validation function. This function checks for common issues like missing values, out-of-range prices, and stale data.

```python
from datetime import datetime, timedelta, timezone

import pandas as pd


def validate_market_data(df: pd.DataFrame, symbol: str,
                         price_col: str = 'price', volume_col: str = 'volume',
                         timestamp_col: str = 'timestamp',
                         max_price: float = 10000.0, min_price: float = 0.01,
                         min_volume: int = 0, max_staleness_seconds: int = 60) -> dict:
    """
    Performs a series of validation checks on market data for a given symbol.

    Args:
        df (pd.DataFrame): DataFrame containing market data.
        symbol (str): The ticker symbol being validated.
        price_col (str): Name of the price column.
        volume_col (str): Name of the volume column.
        timestamp_col (str): Name of the timestamp column.
        max_price (float): Maximum plausible price.
        min_price (float): Minimum plausible price.
        min_volume (int): Minimum plausible volume.
        max_staleness_seconds (int): Max allowed seconds since last update.

    Returns:
        dict: A dictionary of validation results and detected issues.
    """
    validation_results = {
        'symbol': symbol,
        'is_valid': True,
        'issues': []
    }

    if df.empty:
        validation_results['is_valid'] = False
        validation_results['issues'].append('DataFrame is empty.')
        return validation_results

    # 1. Check for expected columns
    required_cols = [price_col, volume_col, timestamp_col]
    missing_cols = [col for col in required_cols if col not in df.columns]
    if missing_cols:
        validation_results['is_valid'] = False
        validation_results['issues'].append(f'Missing required columns: {", ".join(missing_cols)}')
        return validation_results  # Cannot proceed without essential columns

    # Parse timestamps as UTC without mutating the caller's DataFrame
    # (naive timestamps are treated as already being in UTC)
    try:
        timestamps = pd.to_datetime(df[timestamp_col], utc=True)
    except (ValueError, TypeError):
        validation_results['is_valid'] = False
        validation_results['issues'].append(f'Invalid timestamp format in column "{timestamp_col}".')
        return validation_results

    # 2. Check for NaN/Null values in critical columns
    for col in [price_col, volume_col]:
        if df[col].isnull().any():
            validation_results['is_valid'] = False
            validation_results['issues'].append(f'NaN values found in "{col}" column.')

    # 3. Price range check
    if not df[price_col].between(min_price, max_price).all():
        validation_results['is_valid'] = False
        validation_results['issues'].append(f'Price outside plausible range [{min_price}, {max_price}].')

    # 4. Volume check
    if not (df[volume_col] >= min_volume).all():
        validation_results['is_valid'] = False
        validation_results['issues'].append(f'Volume below minimum plausible value {min_volume}.')

    # 5. Staleness check (based on the most recent timestamp)
    latest_timestamp = timestamps.max()
    current_time = pd.Timestamp.now(tz='UTC')
    if (current_time - latest_timestamp).total_seconds() > max_staleness_seconds:
        validation_results['is_valid'] = False
        validation_results['issues'].append(f'Data is stale. Last update at {latest_timestamp} (>{max_staleness_seconds}s ago).')

    # 6. Check for zero prices (common error)
    if (df[price_col] == 0).any():
        validation_results['is_valid'] = False
        validation_results['issues'].append('Zero price detected.')

    return validation_results


# Example Usage:
# Simulate some market data
now = datetime.now(timezone.utc)

data_good = {
    'timestamp': [now - timedelta(seconds=i) for i in range(5)],
    'price': [100.1, 100.2, 100.3, 100.4, 100.5],
    'volume': [1000, 1200, 1100, 1300, 1050]
}
df_good = pd.DataFrame(data_good)
print("Good Data Validation:", validate_market_data(df_good, 'AAPL'))

data_stale = {
    'timestamp': [now - timedelta(minutes=5) - timedelta(seconds=i) for i in range(5)],
    'price': [100.1, 100.2, 100.3, 100.4, 100.5],
    'volume': [1000, 1200, 1100, 1300, 1050]
}
df_stale = pd.DataFrame(data_stale)
print("\nStale Data Validation:", validate_market_data(df_stale, 'MSFT'))

data_bad_price = {
    'timestamp': [now - timedelta(seconds=i) for i in range(5)],
    'price': [100.1, -10.0, 100.3, 100.4, 100.5],  # Bad price
    'volume': [1000, 1200, 1100, 1300, 1050]
}
df_bad_price = pd.DataFrame(data_bad_price)
print("\nBad Price Data Validation:", validate_market_data(df_bad_price, 'GOOG'))

data_missing_col = {
    'timestamp': [now - timedelta(seconds=i) for i in range(5)],
    'price': [100.1, 100.2, 100.3, 100.4, 100.5],
    # 'volume': [1000, 1200, 1100, 1300, 1050]  # Missing volume
}
df_missing_col = pd.DataFrame(data_missing_col)
print("\nMissing Column Data Validation:", validate_market_data(df_missing_col, 'AMZN'))
```

This `validate_market_data` function provides a foundational layer for ensuring data quality. It checks for common issues like missing values, out-of-bounds prices, and staleness, which are critical for real-time trading systems. The output clearly indicates whether the data is deemed valid and lists any detected issues, allowing for programmatic decision-making, such as switching to a backup data source or halting trading for that instrument. The ability to detect stale data is particularly important, as relying on outdated information can lead to erroneous trades, especially in fast-moving markets [1].

Next, let's consider a simplified model for multi-source data ingestion and failover. This conceptual code demonstrates how you might attempt to fetch data from multiple providers and prioritize based on availability and quality.

```python
import random
import time
from datetime import datetime, timezone
from typing import Dict, List, Optional

import pandas as pd

# Reuses validate_market_data() from the previous listing.


# Simulate different data providers
class DataProvider:
    def __init__(self, name: str, latency_ms: int, reliability_percent: int):
        self.name = name
        self.latency_ms = latency_ms
        self.reliability_percent = reliability_percent

    def fetch_data(self, symbol: str) -> Optional[Dict]:
        """Simulates fetching data with given latency and reliability."""
        time.sleep(self.latency_ms / 1000.0)  # Simulate network latency
        if random.randint(1, 100) <= self.reliability_percent:
            # Simulate real-time data with slight variations
            price = round(random.uniform(99.0, 101.0), 2)
            volume = random.randint(500, 2000)
            timestamp = datetime.now(timezone.utc).replace(tzinfo=None)  # naive UTC timestamp
            print(f"  [{self.name}] Successfully fetched data for {symbol}: Price={price}, Volume={volume}")
            return {'symbol': symbol, 'price': price, 'volume': volume, 'timestamp': timestamp}
        else:
            print(f"  [{self.name}] Failed to fetch data for {symbol} (simulated outage).")
            return None


def get_market_data_with_failover(symbol: str, providers: List[DataProvider],
                                  max_retries_per_provider: int = 1) -> Optional[Dict]:
    """
    Attempts to fetch market data from multiple providers with failover.
    Includes basic validation logic.
    """
    for provider in providers:
        print(f"Attempting to fetch data from {provider.name} for {symbol}...")
        for attempt in range(max_retries_per_provider):
            raw_data = provider.fetch_data(symbol)
            if raw_data:
                # Convert raw_data to DataFrame for validation function
                df_temp = pd.DataFrame([raw_data])
                # Stricter staleness limit for real-time data
                validation_result = validate_market_data(df_temp, symbol, max_staleness_seconds=5)
                if validation_result['is_valid']:
                    print(f"  [{provider.name}] Data validated successfully.")
                    return raw_data  # Return the first valid data
                else:
                    print(f"  [{provider.name}] Data failed validation: {validation_result['issues']}. Retrying or trying next provider.")
            else:
                print(f"  [{provider.name}] No data received. Retrying or trying next provider.")
            time.sleep(0.1)  # Small delay before retry

    print(f"Failed to get valid data for {symbol} from all providers after multiple attempts.")
    return None


# Initialize data providers
provider_a = DataProvider("VendorA", latency_ms=50, reliability_percent=95)
provider_b = DataProvider("VendorB", latency_ms=100, reliability_percent=80)
provider_c = DataProvider("VendorC", latency_ms=200, reliability_percent=99)  # Slower but very reliable

# Order of providers matters for primary/secondary failover
data_providers = [provider_a, provider_b, provider_c]

# Fetch data for a symbol
print("\n--- Fetching AAPL Data ---")
aapl_data = get_market_data_with_failover('AAPL', data_providers)
if aapl_data:
    print(f"Final AAPL Data: {aapl_data}")

print("\n--- Fetching MSFT Data (simulating VendorA failure) ---")
# Temporarily reduce reliability of VendorA to simulate failure
provider_a.reliability_percent = 10
msft_data = get_market_data_with_failover('MSFT', data_providers)
if msft_data:
    print(f"Final MSFT Data: {msft_data}")
else:
    print("Could not get MSFT data.")

# Reset reliability for next run
provider_a.reliability_percent = 95
```

This `get_market_data_with_failover` function demonstrates the core logic of data redundancy. It iterates through a list of data providers, attempting to fetch data from each in a prioritized order. Crucially, after fetching, it immediately passes the raw data through our `validate_market_data` function. If the data from the primary provider fails validation (e.g., it's stale, incomplete, or contains erroneous values), the system automatically attempts to fetch from the next provider in the list. This failover mechanism is essential for maintaining operational continuity even when one or more data sources are compromised [3]. The ability to switch seamlessly between data feeds based on real-time quality checks is a cornerstone of resilient algorithmic trading.

Finally, a key concept in financial modeling and risk management is the idea of a "confidence interval" or "tolerance band" around expected values. When validating data, we often compare observed values to historical distributions or expected ranges. A common statistical measure for detecting outliers is the Z-score, which quantifies how many standard deviations an element is from the mean. For a data point $x$, the Z-score is calculated as:

$$Z = \frac{x - \mu}{\sigma}$$

where $\mu$ is the mean of the data and $\sigma$ is its standard deviation. Data points with $|Z|$ above a chosen threshold (3 is a common choice) are often considered outliers. This formula can be applied to price changes, volumes, or other metrics to detect anomalies that might indicate data corruption. For instance, if the daily price change of a stock yields a Z-score of 10, it's highly probable that the data point is erroneous, warranting further investigation or rejection.
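The Z-score test described above can be sketched with NumPy. The function name `zscore_outliers`, the default threshold of 3, and the synthetic return series are assumptions for illustration only.

```python
import numpy as np


def zscore_outliers(values, threshold: float = 3.0) -> np.ndarray:
    """Boolean mask of points whose |Z-score| exceeds `threshold`."""
    x = np.asarray(values, dtype=float)
    sigma = x.std()  # population standard deviation (ddof=0)
    if sigma == 0:
        return np.zeros(x.shape, dtype=bool)  # constant series: nothing to flag
    return np.abs((x - x.mean()) / sigma) > threshold


# Twenty hypothetical daily returns with one injected bad tick at index 10.
daily_returns = [0.001, -0.002, 0.0015, -0.001, 0.0005] * 4
daily_returns[10] = 0.25
mask = zscore_outliers(daily_returns)
print(np.flatnonzero(mask))
```

One caveat worth keeping in mind: the outlier itself inflates both the mean and the standard deviation, and with the population standard deviation no point in a sample of size n can have a Z-score larger than √(n−1). On short windows a threshold of 3 may therefore never trigger, which is one reason a longer history or a robust alternative (such as the IQR test) is often preferred.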

## Backtesting Results & Analysis

While data integrity strategies are primarily about operational resilience rather than direct alpha generation, their impact on backtesting and live trading performance is profound. When backtesting, the quality of historical data is paramount. Any inconsistencies, missing values, or erroneous entries in the historical dataset will lead to misleading backtest results, overstating or understating strategy performance. A strategy that appears robust on flawed historical data will inevitably underperform or fail catastrophically in live trading.

Therefore, before any backtest, the historical data must undergo the same rigorous validation process described above. This includes:

- ▸ Gap Filling: Strategically filling missing data points using interpolation or a last-known-good value approach, carefully documenting the methodology.

- ▸ Outlier Removal/Correction: Identifying and either removing or correcting extreme outliers that are clearly data errors (e.g., a stock price suddenly dropping to zero and then recovering, which is more likely a data glitch than a real market event).

- ▸ Consistency Checks: Ensuring that corporate actions (splits, dividends) are correctly applied and reflected across all historical data sources.
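As a hedged sketch of the gap-filling step in the list above, the snippet below forward-fills short gaps with the last-known-good value and records which rows were filled, so the methodology stays documented in the data itself. The function name `fill_gaps` and the two-bar limit are assumptions, not a recommendation.

```python
import pandas as pd


def fill_gaps(prices: pd.Series, max_gap: int = 2) -> pd.DataFrame:
    """Forward-fill at most `max_gap` consecutive missing bars and flag every filled row."""
    filled = prices.ffill(limit=max_gap)
    return pd.DataFrame({
        "price": filled,
        # True only where the original value was missing and a fill was applied
        "was_filled": prices.isna() & filled.notna(),
    })


idx = pd.date_range("2024-01-01", periods=7, freq="D")
raw = pd.Series([100.0, None, 101.0, None, None, None, 102.0], index=idx)
clean = fill_gaps(raw, max_gap=2)
print(clean)
```

Gaps longer than the limit are deliberately left as missing, so a long outage shows up in the backtest as absent data rather than as an artificially flat price.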

The expected performance characteristics of a strategy built on a resilient data pipeline include:

- ▸ Reduced Slippage and Execution Errors: By ensuring real-time data is accurate and timely, the strategy can make more informed execution decisions, minimizing slippage and avoiding trades based on stale quotes.

- ▸ Improved Signal Reliability: Clean, validated data leads to more reliable input for signal generation, reducing false positives and negatives. This directly translates to more consistent alpha.

- ▸ Lower Operational Overhead: Automated data validation and failover reduce the need for manual intervention during data outages, freeing up quant researchers and traders to focus on strategy enhancement rather than firefighting.

- ▸ Enhanced Risk Management: Accurate data is fundamental to calculating risk metrics (VaR, stress tests) and enforcing position limits. Corrupted data can lead to underestimation of risk and excessive exposure.

Metrics to track in backtesting and live trading to assess the impact of data integrity include:

- ▸ Data Latency Distribution: Analyze the distribution of data arrival times from various sources.

- ▸ Data Completeness Rate: Percentage of expected data points actually received.

- ▸ Data Error Rate: Frequency of data points flagged by validation rules.

- ▸ Failover Event Frequency: How often the system switches to a backup data source.

- ▸ Strategy Downtime Due to Data Issues: Measure the duration and frequency of strategy suspension caused by data problems.

- ▸ Discrepancy Between Redundant Sources: Track the magnitude of differences between prices/volumes from primary and secondary feeds.
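Several of the metrics in the list above can be computed from a simple tick log. The sketch below assumes a hypothetical log layout with `source`, `is_valid`, and `latency_ms` columns; neither the column names nor the function `data_quality_metrics` come from the original article.

```python
import pandas as pd


def data_quality_metrics(log: pd.DataFrame, expected_ticks: int) -> dict:
    """Summarise completeness, error rate, source switches, and tail latency."""
    received = len(log)
    # A source switch is any row whose source differs from the previous row's.
    switches = (log["source"] != log["source"].shift()).iloc[1:].sum()
    return {
        "completeness_rate": received / expected_ticks,
        "error_rate": float((~log["is_valid"]).mean()) if received else 0.0,
        "source_switches": int(switches),
        "p95_latency_ms": float(log["latency_ms"].quantile(0.95)) if received else float("nan"),
    }


tick_log = pd.DataFrame({
    "source": ["VendorA", "VendorA", "VendorB", "VendorB", "VendorA"],
    "is_valid": [True, False, True, True, True],
    "latency_ms": [40.0, 900.0, 110.0, 120.0, 45.0],
})
metrics = data_quality_metrics(tick_log, expected_ticks=6)
print(metrics)
```

Note that `source_switches` counts every change of active source, including the switch back to the primary feed, so two switches here correspond to a single failover episode.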

Analyzing these metrics provides quantitative insights into the effectiveness of the data integrity framework and identifies areas for further improvement. A robust data pipeline, while not directly contributing to alpha, acts as a critical performance enhancer and risk mitigator, ensuring that the strategy's true edge is not eroded by data-related failures.

## Risk Management & Edge Cases

Even with the most robust data redundancy and validation systems, algorithmic trading strategies must account for residual risks and edge cases where data integrity can still be compromised or where the absence of data forces difficult decisions. The "Day Without Data" scenario, where market data and news feeds are entirely absent, is the ultimate test of a strategy's fail-safe protocols [5].

1. Position Sizing and Dynamic Exposure Adjustment:

In periods of heightened data uncertainty or partial data loss, strategies should dynamically adjust their position sizing. If the reliability of data for a specific asset or an entire market segment degrades, the algorithm should automatically reduce its exposure to that segment. This could involve scaling down trade sizes, tightening stop-loss limits, or even completely exiting positions if data quality falls below a critical threshold. For example, if a primary data feed for a particular sector is disrupted, leading to reliance on slower, less granular data [2], the strategy might reduce its allocation to that sector or switch to a more conservative, long-term approach for its positions.
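A minimal sketch of this idea scales position size with a data quality score between 0 and 1. How such a score is constructed (for example from completeness, staleness, and inter-source divergence) is left open here; the function name `scaled_position` and the 0.5 and 0.9 cut-offs are purely illustrative assumptions.

```python
def scaled_position(base_size: float, quality_score: float,
                    exit_below: float = 0.5, full_above: float = 0.9) -> float:
    """Scale position size linearly with data quality; no exposure below `exit_below`."""
    if quality_score < exit_below:
        return 0.0            # data too unreliable: hold no exposure
    if quality_score >= full_above:
        return base_size      # data healthy: trade the full size
    # In between, interpolate linearly from 0 up to the full size.
    return base_size * (quality_score - exit_below) / (full_above - exit_below)


for score in (0.95, 0.70, 0.30):
    print(score, scaled_position(1000.0, score))
```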

2. Drawdown Controls and Circuit Breakers:

Beyond typical financial drawdown controls, data-driven circuit breakers are essential. If the data quality metrics (e.g., error rate, staleness, divergence between sources) exceed predefined thresholds for a sustained period, the system should trigger a "data-integrity-induced" circuit breaker. This might involve:

- ▸ Halting New Trades: Immediately stopping the placement of any new orders.

- ▸ Pausing Existing Strategies: Temporarily suspending the execution logic of active strategies.

- ▸ Panic Exit Protocols: For extreme cases, initiating pre-defined market-on-close or liquidation orders for open positions, especially if the data outage is widespread and prolonged [5]. These protocols must be carefully designed to avoid exacerbating market illiquidity during a crisis.
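A data-integrity circuit breaker of this kind can be sketched as a latch that trips after several consecutive bad monitoring intervals and stays open until an operator resets it. The class name `DataCircuitBreaker`, the 5% error-rate limit, and the three-interval rule are assumptions chosen for the example.

```python
class DataCircuitBreaker:
    """Trips after `trip_after` consecutive intervals with error_rate above the limit."""

    def __init__(self, max_error_rate: float, trip_after: int):
        self.max_error_rate = max_error_rate
        self.trip_after = trip_after
        self._consecutive_breaches = 0
        self.tripped = False

    def record(self, error_rate: float) -> bool:
        """Record one monitoring interval; return True while trading may continue."""
        if self.tripped:
            return False  # stays open until reset() is called
        if error_rate > self.max_error_rate:
            self._consecutive_breaches += 1
        else:
            self._consecutive_breaches = 0
        if self._consecutive_breaches >= self.trip_after:
            self.tripped = True
        return not self.tripped

    def reset(self) -> None:
        """Manual reset, intended for use after data integrity has been verified."""
        self._consecutive_breaches = 0
        self.tripped = False


breaker = DataCircuitBreaker(max_error_rate=0.05, trip_after=3)
readings = [0.01, 0.08, 0.02, 0.09, 0.10, 0.12, 0.01]
allowed = [breaker.record(r) for r in readings]
print(allowed)
```

The breaker does not close on its own when readings recover, which matches the principle that strategies should not resume until data integrity has been verified.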

3. Regime Failures and Model Degradation:

Data integrity issues can lead to "regime failures" where the underlying assumptions of a model no longer hold true. If a strategy relies on high-frequency data for optimal execution, and only low-frequency data becomes available, the strategy's performance will degrade significantly. The system must be able to detect such regime shifts in data availability and quality. This might involve monitoring the statistical properties of incoming data streams (e.g., volatility, correlation structures) and comparing them against historical norms. If significant deviations are detected, the strategy should either adapt its parameters (e.g., widening spreads, reducing order sizes) or, if the deviation is too severe, suspend trading. The challenge for quantitative strategies in performing sector rotation without explicit performance data, forcing reliance on broader economic indicators, is a prime example of such a regime shift [2].

4. Human-in-the-Loop and Manual Overrides:

Even the most sophisticated automated systems need a "human-in-the-loop" for extreme edge cases. Contingency plans must clearly define when and how human operators can intervene, providing manual overrides for trade execution, position management, and system restarts. This requires:

- ▸ Clear Dashboards: Real-time visibility into data health, strategy status, and open positions.

- ▸ Defined Escalation Paths: Who to contact and in what order during a crisis.

- ▸ Pre-approved Playbooks: Step-by-step instructions for common data-related emergencies.

- ▸ Communication Protocols: How to communicate with brokers, exchanges, and other market participants during a data outage.

The incidents cited earlier, in which an algorithmic stock spotlight was halted by a data feed disruption [3] or by a complete absence of specific stock data [1], show why a mechanism, automated or manual, must exist to prevent the system from operating on flawed or absent information. This level of control is crucial for managing the challenges algorithmic traders face when market data and news feeds are entirely absent [5].

## Key Takeaways

- ▸ Data Redundancy is Non-Negotiable: Source critical market data from multiple independent providers with diverse infrastructure to eliminate single points of failure and enable automatic failover.

- ▸ Continuous, Multi-Stage Data Validation: Implement rigorous validation checks (schema, completeness, range, consistency, staleness, outliers) at ingestion and throughout the data pipeline.

- ▸ Real-time Data Quality Monitoring: Deploy dedicated systems to track data latency, completeness, error rates, and inter-source divergence, with automated alerts for breaches.

- ▸ Comprehensive Contingency Planning: Develop detailed, pre-defined protocols for partial data loss, widespread outages, and data recovery, including strategy suspension and manual intervention.

- ▸ Dynamic Risk Adjustment: Integrate data quality metrics into risk management, allowing for dynamic position sizing adjustments, tighter controls, and data-driven circuit breakers during periods of uncertainty.

- ▸ Backtesting Requires Pristine Data: Rigorously validate and clean historical data before backtesting to ensure reliable performance projections and avoid misleading results.

- ▸ Human Oversight for Edge Cases: Maintain a "human-in-the-loop" framework with clear dashboards, escalation paths, and playbooks for extreme data integrity failures.

## Applied Ideas

Every strategy blueprint above can be taken from concept to live execution with the right tooling. Here are concrete next steps for practitioners:

- ▸ Backtest first: Validate any regime-detection or signal-generation approach with walk-forward analysis before committing capital.

- ▸ Start small: Deploy with fractional position sizing and paper-trade for at least one full market cycle.

- ▸ Monitor regime shifts: Set automated alerts for when your model detects a regime change; manual review before large rebalances is prudent.

- ▸ Iterate on KPIs: Track Sharpe, Sortino, max drawdown, and win rate weekly. If any metric degrades beyond your predefined threshold, pause and re-evaluate.

- ▸ Combine signals: The strongest edges come from combining uncorrelated signals, so pair the ideas in this post with your existing alpha sources.

## Sources & Research

[1] Algorithmic Trading Spotlight: Data Absence Halts Stock Analysis

[2] Algorithmic Sector Rotation: Navigating Data Gaps in Economic Cycle Analysis

[3] Algorithmic Stock Spotlight Halted by Data Feed Disruption

[4] Algorithmic Sector Rotation Analysis Halted Due to Data Outage

[5] Algorithmic Trading's 'Day Without Data': Quant Strategies Face Unprecedented Void
