Beyond the Headlines: An Algorithmic Framework for Interpreting Earnings and Macro Regime Shifts

Modern markets move too fast for a glance at the headlines. For algorithmic traders, earnings season and macro regime shifts are data streams whose signals, properly interpreted, can be a source of alpha. In 2026, with persistent inflation and elevated interest rates [6] alongside significant sector rotations [1, 3, 7], distilling actionable insight from that flow algorithmically matters more than ever. This article lays out a framework for quantitative interpretation that moves from anecdotal observation to systematic, adaptive strategies built for volatile markets and evolving economic conditions.

The Current Landscape

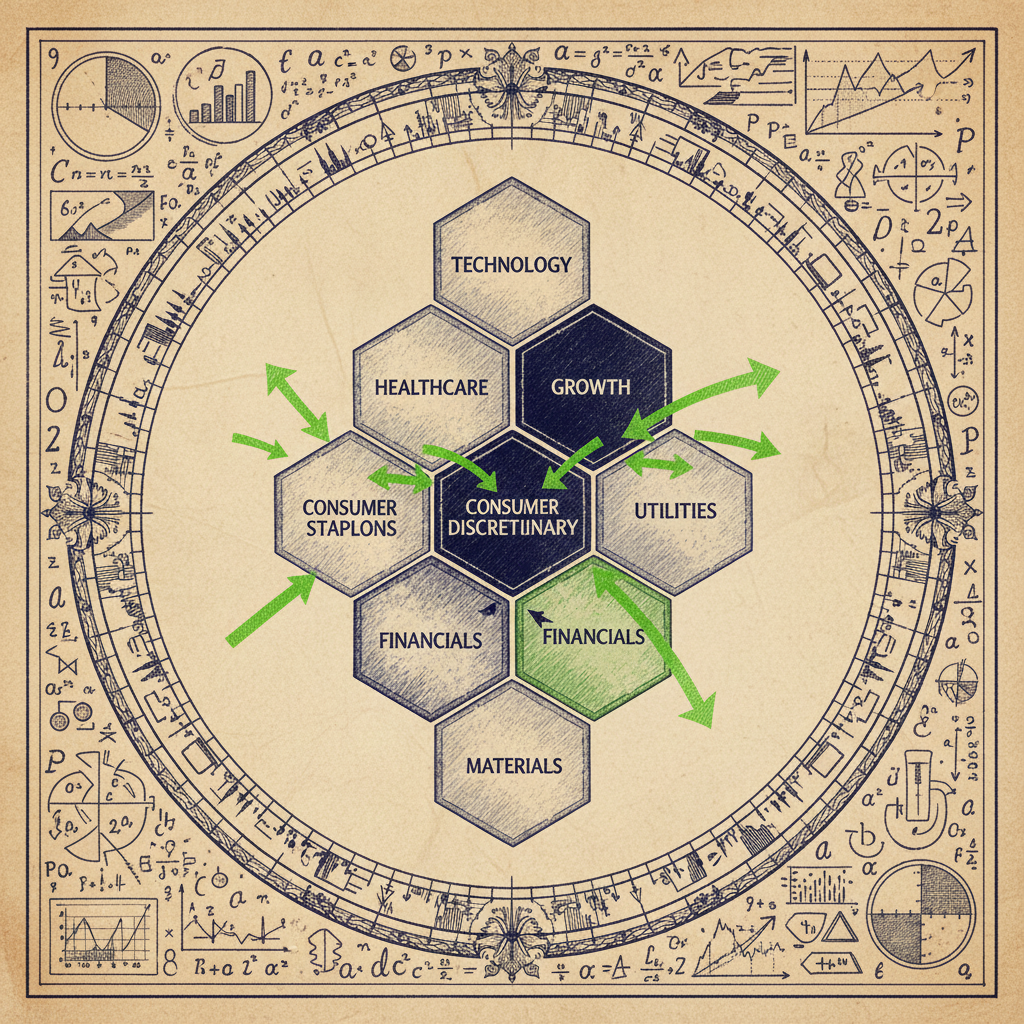

The year 2026 presents a fascinating, albeit challenging, environment for algorithmic traders. Recent analyses highlight a distinct macro regime where growth sectors, particularly healthcare, financials, and technology, are exhibiting strong performance, creating divergences that algorithmic strategies are actively targeting [1]. This isn't a uniform market ascent; rather, it’s a nuanced landscape where sector leadership is shifting, and traditional defensive plays are lagging [7]. The Q1/Q4 2026 earnings reports have been particularly illuminating, revealing a strong sector rotation with Financials and Healthcare leading the charge, while Utilities have lagged significantly [3]. This dynamic environment underscores the critical need for adaptive strategies that can identify and capitalize on these shifts in real-time.

The influx of Q1/Q4 2026 earnings data from diverse global firms gives algorithmic traders fertile ground, feeding event-driven models designed to detect market reactions and surface alpha opportunities [4]. For instance, an algorithmic reading of Galiano Gold's Q1 2026 earnings report lets traders running event-driven strategies in the precious metals sector process and react to company-level disclosures systematically [2]. This granular, event-driven approach is most powerful when combined with a broader macro perspective. The challenge lies not just in processing individual earnings reports, but in understanding how these micro-level events aggregate and interact with prevailing macroeconomic conditions, such as the persistent inflation and elevated interest rates that continue to influence systematic strategies like CTAs and risk parity [6].

Furthermore, the market's response to these earnings and macro shifts is not always straightforward. Sometimes, explicit real-time sector performance data can be scarce, necessitating algorithmic sector rotation strategies that rely on robust economic regime detection and predictive modeling [5]. This emphasizes that a purely reactive approach is insufficient; successful algorithmic trading in this environment requires a proactive framework that can anticipate and adapt to changes in market leadership and underlying economic cycles. The confluence of strong sector rotation, the impact of elevated rates, and the continuous flow of earnings data mandates a sophisticated, multi-layered algorithmic approach that can interpret both the micro and macro signals effectively.

Theoretical Foundation

| Feature | Efficient Market Hypothesis (EMH) | Adaptive Market Hypothesis (AMH) |
|---|---|---|
| Market Efficiency | Constant & always efficient | Varies over time; influenced by environment |
| Market Participants | Rational, profit-maximizing | Bounded rationality, learn & adapt |
| Arbitrage Opportunities | Rare, quickly eliminated | Exist periodically, exploited by adaptive agents |
| Information Reflection | Instantaneous & full | Gradual, depends on market conditions & adaptation |
| Implication for Algos | Difficult to consistently beat market | Alpha opportunities in adapting to changing regimes |

At the heart of interpreting earnings and macro regime shifts algorithmically lie two ideas: the Adaptive Market Hypothesis (AMH) and regime detection. Unlike the Efficient Market Hypothesis (EMH), which posits that all information is immediately reflected in prices, the AMH holds that market efficiency is not constant but varies over time, influenced by environmental conditions and the adaptive behavior of market participants. This theoretical underpinning justifies strategies that seek to identify and exploit temporary inefficiencies or predictable patterns that emerge during specific market regimes, such as earnings season or periods of significant macro shifts.

Our framework begins with the premise that market behavior, particularly around earnings announcements, is not random but follows discernible patterns influenced by information asymmetry, behavioral biases, and the market's collective interpretation of new data. For macro shifts, the market transitions between distinct states—e.g., growth, recession, inflation, deflation—each characterized by specific asset class correlations, volatilities, and return profiles. Identifying these regimes is crucial for tailoring algorithmic responses. Hidden Markov Models (HMMs) are particularly well-suited for this task, as they can model a system with unobservable (hidden) states that generate observable sequences of data. In our context, the hidden states could be "growth regime," "inflationary regime," or "earnings-driven volatility regime," while the observable data could be sector returns, volatility indices, interest rate changes, or earnings surprise magnitudes.

Let's consider a basic HMM for regime detection. We assume there are $N$ hidden states, $S = \{s_1, s_2, \dots, s_N\}$. At each time step $t$, the system is in one of these states, $q_t \in S$. The state transitions are governed by a transition probability matrix $A = [a_{ij}]$, where $a_{ij} = P(q_{t+1} = s_j \mid q_t = s_i)$. The observations, $o_t$, are generated from the current state according to an emission probability distribution $B = \{b_j(o_t)\}$, where $b_j(o_t) = P(o_t \mid q_t = s_j)$. The initial state probabilities are given by $\pi = \{\pi_i\}$, where $\pi_i = P(q_1 = s_i)$.

The core problems addressed by HMMs are:

1. Evaluation: Given the model parameters $\lambda = (A, B, \pi)$ and an observation sequence $O = (o_1, o_2, \dots, o_T)$, compute $P(O \mid \lambda)$. This helps in scoring how well a model fits a given sequence.
2. Decoding: Given $\lambda$ and $O$, find the most likely sequence of hidden states $Q = (q_1, q_2, \dots, q_T)$ that produced $O$. This is crucial for identifying the current market regime. The Viterbi algorithm is commonly used for this.
3. Learning: Given $O$, estimate the model parameters $\lambda$. The Baum-Welch algorithm (an expectation-maximization algorithm) is used for this.
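To make the decoding problem concrete, here is a minimal sketch of the Viterbi algorithm in plain Python. It assumes discrete emissions (each observation is an integer index), which is a simplification of the continuous data discussed in this article. The function name `viterbi`, the two-state "calm/stressed" setup, and all probabilities are hypothetical values chosen for illustration.

```python
import math


def _log(p):
    """Natural log that maps zero probability to -inf instead of raising."""
    return math.log(p) if p > 0 else float("-inf")


def viterbi(obs, start_p, trans_p, emit_p):
    """Most likely hidden-state path for a discrete-emission HMM.

    obs     : list of observation indices
    start_p : start_p[i]    = P(q_1 = s_i)
    trans_p : trans_p[i][j] = P(q_{t+1} = s_j | q_t = s_i)
    emit_p  : emit_p[j][k]  = P(o_t = k | q_t = s_j)
    """
    n_states = len(start_p)

    # delta[i]: best log-probability of any path that ends in state i
    delta = [_log(start_p[i]) + _log(emit_p[i][obs[0]]) for i in range(n_states)]
    backpointers = []

    for o in obs[1:]:
        prev, delta, ptr = delta, [], []
        for j in range(n_states):
            # Best predecessor state for landing in state j
            best_i = max(range(n_states), key=lambda i: prev[i] + _log(trans_p[i][j]))
            ptr.append(best_i)
            delta.append(prev[best_i] + _log(trans_p[best_i][j]) + _log(emit_p[j][o]))
        backpointers.append(ptr)

    # Backtrack from the most probable final state
    state = max(range(n_states), key=lambda i: delta[i])
    path = [state]
    for ptr in reversed(backpointers):
        state = ptr[state]
        path.append(state)
    return path[::-1]


# Hypothetical toy model: state 0 = calm, state 1 = stressed;
# observation 0 = small daily move, observation 1 = large daily move.
start_p = [0.5, 0.5]
trans_p = [[0.9, 0.1], [0.1, 0.9]]
emit_p = [[0.9, 0.1], [0.2, 0.8]]

path = viterbi([0, 0, 1, 1, 1, 0, 0, 0], start_p, trans_p, emit_p)
print(path)
```

Working in log space avoids numerical underflow on long sequences, which matters once the observation series spans years of daily data.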

For example, to detect macro regimes, we might use observable data such as GDP growth rates, inflation rates, interest rate movements [6], and sector performance [1, 3, 7]. The hidden states could represent "High Growth/Low Inflation," "Stagflation," "Recession," etc. The Viterbi algorithm would then allow us to infer the most probable sequence of these economic regimes given the historical data. This approach is fundamental to adaptive strategies, as it allows for dynamic adjustment of portfolio allocations, risk parameters, and trading signals based on the inferred regime. Tools like Regime-Adaptive Portfolio, which dynamically allocates across momentum, mean-reversion, and defensive regimes using Hidden Markov Models, exemplify this theoretical application.
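One practical caveat: Viterbi decoding over a full history uses later observations to label earlier ones, so it is not directly usable for live decisions. A common alternative is the forward (filtering) recursion, which gives the probability of each regime using only data up to the current step. The sketch below assumes one-dimensional Gaussian emissions on daily returns; `filtered_regime_probs` and every parameter value are illustrative assumptions, not estimates from real data.

```python
import math


def gaussian_pdf(x, mean, std):
    """Density of a univariate normal distribution."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def filtered_regime_probs(observations, start_p, trans_p, means, stds):
    """Forward filter: P(q_t = s_i | o_1..o_t) for every t, using past data only."""
    n = len(start_p)
    prior = list(start_p)
    history = []
    for x in observations:
        # Update step: weight the prior by each state's likelihood of emitting x
        joint = [prior[i] * gaussian_pdf(x, means[i], stds[i]) for i in range(n)]
        total = sum(joint)
        posterior = [p / total for p in joint]
        history.append(posterior)
        # Predict step: push the posterior through the transition matrix
        prior = [sum(posterior[i] * trans_p[i][j] for i in range(n)) for j in range(n)]
    return history


# Hypothetical parameters: state 0 = calm, state 1 = stressed
start_p = [0.5, 0.5]
trans_p = [[0.9, 0.1], [0.1, 0.9]]
means = [0.0005, -0.0002]  # daily mean return per regime
stds = [0.008, 0.015]      # daily return volatility per regime

daily_returns = [0.001, -0.002, 0.003, -0.030, 0.028, -0.025]
probs = filtered_regime_probs(daily_returns, start_p, trans_p, means, stds)
for r, p in zip(daily_returns, probs):
    print(f"return={r:+.3f}  P(calm)={p[0]:.3f}  P(stressed)={p[1]:.3f}")
```

In this toy run the stressed-regime probability rises sharply once the large moves arrive, which is the kind of signal an allocation or risk rule could respond to.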

Beyond regime detection, the algorithmic interpretation of earnings involves natural language processing (NLP) and sentiment analysis. Earnings call transcripts, management guidance, and analyst reports contain rich textual data that can reveal nuances beyond the reported numbers. For instance, the tone of management's discussion about future outlook or the emphasis on certain operational metrics can be indicative of underlying business health or future performance. Machine learning models, trained on historical earnings data and subsequent market reactions, can learn to identify patterns in these textual features that correlate with price movements, volatility spikes, or sector rotation [4]. This allows quants to move "beyond the headlines" and extract deeper, less obvious signals.
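As a very rough illustration of turning transcript text into a feature, the sketch below computes a lexicon-based tone score. The word lists are tiny, hypothetical examples; production systems typically rely on finance-specific dictionaries or trained language models, so treat this as a starting point only.

```python
import re

# Hypothetical mini-lexicons, for illustration only
POSITIVE = {"growth", "strong", "record", "improved", "exceeded", "confident"}
NEGATIVE = {"decline", "weak", "headwinds", "uncertain", "impairment", "shortfall"}


def tone_score(text):
    """Net tone in [-1, 1]: (pos - neg) / (pos + neg), or 0.0 with no lexicon hits."""
    tokens = re.findall(r"[a-z']+", text.lower())
    pos = sum(t in POSITIVE for t in tokens)
    neg = sum(t in NEGATIVE for t in tokens)
    hits = pos + neg
    return (pos - neg) / hits if hits else 0.0


upbeat = "We delivered record revenue and strong margin growth."
mixed = "Demand was weak and we face headwinds, though cash flow improved."

print(tone_score(upbeat))
print(tone_score(mixed))
```

A score like this is only useful relative to a baseline, for example the same company's tone in prior quarters, since management language differs widely across firms.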

How It Works in Practice

Translating this theoretical framework into practical algorithmic strategies involves several steps, from data acquisition and feature engineering to model training and signal generation. The core idea is to build adaptive systems that can dynamically adjust their trading logic based on the identified macro regime and the granular insights derived from earnings events.

First, data acquisition and pre-processing are crucial. For earnings, this includes structured data like revenue, EPS, guidance, and analyst estimates, alongside unstructured data like earnings call transcripts, news articles, and social media sentiment. For macro regimes, time series data such as interest rates, inflation indicators, GDP growth, unemployment rates, and sector-specific performance metrics (e.g., healthcare sector returns, financial sector returns [1, 3]) are essential. Data cleaning, normalization, and outlier detection are standard but critical steps.

Second, feature engineering transforms raw data into meaningful inputs for our models. For earnings, features might include earnings surprise (actual EPS vs. consensus), revenue surprise, guidance changes, and various NLP-derived metrics from transcripts (e.g., sentiment scores, topic frequencies, keyword presence related to specific risks or opportunities). For macro regimes, features could be moving averages of economic indicators, volatility measures, term spread, or inter-market correlations. For example, the relative performance of Tech & Growth sectors versus defensive plays [7] could be a key feature for identifying a growth-oriented regime.
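The earnings-surprise features mentioned above can be sketched in a few lines. The helper names and sample numbers are hypothetical. The standardized version divides the current surprise by the standard deviation of past forecast errors, one common way to make surprises comparable across companies.

```python
from statistics import stdev


def eps_surprise_pct(actual, consensus):
    """Surprise as a fraction of |consensus|; None when consensus is zero."""
    if consensus == 0:
        return None
    return (actual - consensus) / abs(consensus)


def standardized_surprise(actual, consensus, past_errors):
    """Current forecast error scaled by the sample std of past errors.

    past_errors holds earlier (actual - consensus) values for the same company.
    Returns None when there is too little history or no dispersion.
    """
    if len(past_errors) < 2:
        return None
    scale = stdev(past_errors)
    return (actual - consensus) / scale if scale > 0 else None


# Hypothetical example: EPS of 1.10 against a 1.00 consensus
pct = eps_surprise_pct(1.10, 1.00)
sue = standardized_surprise(1.10, 1.00, [0.02, -0.01, 0.03, 0.00])
print(f"surprise: {pct:.1%}, standardized surprise: {sue:.2f}")
```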

Third, regime detection models, such as the HMM described earlier, are trained on historical macro features to identify distinct market states. Once these regimes are identified, specific algorithmic strategies can be associated with each. For instance, a "growth regime" might favor momentum strategies in technology and healthcare [1, 7], while an "inflationary regime" might lean towards value stocks or commodities, and an "elevated rates" regime might necessitate adjustments to risk-parity or CTA strategies [6].

Here's a simplified Python example demonstrating how one might use a Gaussian HMM for regime detection on a synthetic dataset, which could represent a simplified market environment. In a real-world scenario, the observations would be derived from actual financial data like sector returns, volatility, or interest rate changes.

```python
import numpy as np
import matplotlib.pyplot as plt
from hmmlearn import hmm

# --- 1. Simulate data for demonstration ---
# In a real scenario, this would be actual financial time series data,
# e.g., daily returns of different sectors, volatility indices, interest rates.
# We simulate two regimes: low volatility/positive return and
# high volatility/slightly negative return.
rng = np.random.default_rng(42)
n_samples = 500
n_features = 2  # e.g., daily return and daily volatility

# Regime 0: low volatility, moderate positive return
mean0 = np.array([0.0005, 0.008])  # e.g., 0.05% daily return, 0.8% daily vol
cov0 = np.array([[0.000001, 0.0000001],
                 [0.0000001, 0.000004]])

# Regime 1: high volatility, slightly negative return
mean1 = np.array([-0.0002, 0.015])  # e.g., -0.02% daily return, 1.5% daily vol
cov1 = np.array([[0.000004, 0.0000005],
                 [0.0000005, 0.000009]])
regime_params = [(mean0, cov0), (mean1, cov1)]

# Transition probabilities used only to simulate data (90% chance to stay put).
# The fitted HMM learns its own transition matrix.
transition_matrix = np.array([[0.9, 0.1],
                              [0.1, 0.9]])

states = np.zeros(n_samples, dtype=int)
observations = np.zeros((n_samples, n_features))

current_state = 0  # Start in regime 0
for i in range(n_samples):
    states[i] = current_state  # Record the state that generates observation i
    mean, cov = regime_params[current_state]
    observations[i] = rng.multivariate_normal(mean, cov)
    # Draw the next state from the current state's transition row
    current_state = rng.choice(2, p=transition_matrix[current_state])

# --- 2. Train an HMM for regime detection ---
# Standardize features first: hmmlearn's default covariance floor
# (min_covar=1e-3) is large relative to raw daily-return scales.
# In a real scenario, compute the scaling on a training set only and
# validate the model on a held-out test set.
X = (observations - observations.mean(axis=0)) / observations.std(axis=0)

n_components = 2  # We simulated 2 regimes, so we fit 2 hidden states
model = hmm.GaussianHMM(n_components=n_components, covariance_type="full",
                        n_iter=100, random_state=42)
model.fit(X)

# Predict the most likely sequence of states (Viterbi decoding)
raw_states = model.predict(X)

# HMM state labels are arbitrary, so relabel them by learned mean volatility:
# label 0 = lower-volatility state, label 1 = higher-volatility state.
order = np.argsort(model.means_[:, 1])
relabel = np.empty(n_components, dtype=int)
relabel[order] = np.arange(n_components)
hidden_states = relabel[raw_states]

# --- 3. Visualize results ---
plt.figure(figsize=(15, 7))

plt.subplot(2, 1, 1)
plt.plot(observations[:, 0], label='Feature 1 (e.g., Daily Return)')
plt.plot(observations[:, 1], label='Feature 2 (e.g., Daily Volatility)')
plt.title('Simulated Financial Observations')
plt.legend()
plt.grid(True)

plt.subplot(2, 1, 2)
plt.plot(hidden_states, drawstyle='steps-post', label='Predicted Hidden State')
plt.plot(states, drawstyle='steps-post', linestyle='--', alpha=0.7,
         label='True Hidden State (Simulated)')
plt.yticks([0, 1], ['Regime 0', 'Regime 1'])
plt.title('Predicted Market Regimes vs. True Regimes')
plt.xlabel('Time Step')
plt.ylabel('Regime')
plt.legend()
plt.grid(True)

plt.tight_layout()
plt.show()

# Print learned parameters in relabeled order (standardized feature units)
print("\nLearned Transition Matrix:")
print(model.transmat_[np.ix_(order, order)])
print("\nLearned Means for each state:")
print(model.means_[order])
print("\nLearned Covariances for each state:")
print(model.covars_[order])

# --- 4. Actionable strategy based on regimes (conceptual) ---
# This part is conceptual; it depends heavily on the specific trading strategy.
def get_strategy_for_regime(regime_id):
    if regime_id == 0:  # Lower-volatility state
        return "Implement long-only momentum in growth sectors (Tech, Healthcare)."
    elif regime_id == 1:  # Higher-volatility state
        return ("Shift to defensive assets, reduce leverage, or employ "
                "mean-reversion in high-volatility sectors.")
    return "Default/Neutral Strategy"

print("\nExample of Strategy Adaptation based on last predicted regime:")
last_predicted_regime = int(hidden_states[-1])
print(f"Current predicted regime: Regime {last_predicted_regime}")
print(f"Recommended strategy: {get_strategy_for_regime(last_predicted_regime)}")
```

Fourth, event-driven signal generation integrates earnings-specific insights. For example, an algorithmic system could monitor earnings releases for companies in leading sectors like Financials and Healthcare [3], or for individual names such as Galiano Gold in precious metals [2]. When a company reports an earnings surprise, the system would analyze the magnitude of the surprise, the accompanying guidance, and the sentiment from the earnings call. This information, combined with the current macro regime, would then generate a trading signal. For instance, a positive earnings surprise in a growth sector during a "growth regime" might trigger a long position, while the same surprise in a "recession regime" might be viewed with more skepticism or even as a short-term fade opportunity.
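A minimal sketch of that conditioning logic follows. The `EarningsEvent` fields, the regime weights, the coefficients, and the threshold are all hypothetical placeholders that would need to be calibrated on historical data. Here a recession regime simply shrinks the score (the "skepticism" case) rather than flipping it into a fade.

```python
from dataclasses import dataclass


@dataclass
class EarningsEvent:
    ticker: str
    surprise_pct: float   # (actual - consensus) / |consensus|
    guidance_change: int  # +1 raised, 0 unchanged, -1 lowered
    tone: float           # transcript tone score in [-1, 1]


# Hypothetical weights: how much an earnings signal is trusted in each regime
REGIME_WEIGHT = {"growth": 1.0, "inflation": 0.5, "recession": 0.25}


def earnings_signal(event, regime, threshold=0.05):
    """Combine event features with the macro regime into long/short/flat."""
    raw = event.surprise_pct + 0.05 * event.guidance_change + 0.02 * event.tone
    score = raw * REGIME_WEIGHT.get(regime, 0.0)  # unknown regime: stay flat
    if score > threshold:
        return "long"
    if score < -threshold:
        return "short"
    return "flat"


# Illustrative event: a 10% beat with raised guidance and a mildly positive call
beat = EarningsEvent("XYZ", surprise_pct=0.10, guidance_change=1, tone=0.5)
print(earnings_signal(beat, "growth"))     # same event, different regimes
print(earnings_signal(beat, "recession"))
```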

Finally, adaptive portfolio construction and risk management tie everything together. The identified macro regime dictates the overarching portfolio allocation (e.g., higher exposure to equities in a growth regime, more bonds/cash in a recession). Within this allocation, event-driven signals from earnings provide tactical opportunities. Risk management parameters, such as position sizing, stop-loss levels, and leverage, are also adjusted dynamically based on the current regime's volatility and correlation characteristics. For example, during periods of elevated rates and macro crosscurrents, systematic strategies need robust algorithmic approaches to manage risk effectively [6]. This multi-layered approach allows algorithms to not only react to market events but to do so in a contextually aware manner, significantly enhancing their robustness and potential for alpha generation.
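One simple way to express regime-dependent sizing is volatility targeting with regime-specific assumptions. In the sketch below, the per-regime volatility forecasts, leverage caps, and the 10% target are hypothetical numbers. Blending variances by regime probability ignores differences in regime means, which is a deliberate simplification.

```python
# Hypothetical per-regime assumptions: annualized vol forecast and leverage cap
REGIME_RISK = {
    0: {"ann_vol": 0.12, "max_leverage": 1.50},  # calm / growth regime
    1: {"ann_vol": 0.25, "max_leverage": 0.75},  # stressed regime
}


def target_exposure(regime_probs, target_vol=0.10):
    """Portfolio exposure that aims for target_vol given regime probabilities.

    regime_probs maps regime id -> probability (e.g., from an HMM filter).
    """
    # Probability-weighted variance and leverage cap across regimes
    expected_var = sum(p * REGIME_RISK[r]["ann_vol"] ** 2 for r, p in regime_probs.items())
    cap = sum(p * REGIME_RISK[r]["max_leverage"] for r, p in regime_probs.items())
    return min(target_vol / expected_var ** 0.5, cap)


calm = target_exposure({0: 0.9, 1: 0.1})
stressed = target_exposure({0: 0.1, 1: 0.9})
print(f"exposure when mostly calm:     {calm:.2f}")
print(f"exposure when mostly stressed: {stressed:.2f}")
```

Using regime probabilities rather than a single hard label lets exposure change gradually as evidence accumulates, instead of jumping on every reclassification.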

Implementation Considerations for Quant Traders

Implementing such a sophisticated algorithmic framework requires careful consideration of several practical aspects, ranging from data infrastructure to computational resources and model robustness. The pursuit of alpha through adaptive strategies is not without its challenges, and anticipating these can differentiate successful implementations from costly failures.

Firstly, data quality and latency are paramount. High-frequency earnings data, including real-time transcripts and sentiment feeds, must be integrated seamlessly. For macro indicators, access to reliable, frequently updated economic releases is critical. Any delay or inaccuracy in data can lead to stale signals and erroneous trades. Furthermore, the sheer volume of data, especially unstructured text from thousands of earnings calls, necessitates robust data pipelines and storage solutions. Quant traders must invest in enterprise-grade data infrastructure capable of handling terabytes of financial data, ensuring low-latency access for real-time decision-making. The challenge of navigating data scarcity for sector rotation strategies, as highlighted in [5], underscores the need for creative data sourcing and robust imputation techniques when explicit real-time data is unavailable.

Secondly, computational costs and infrastructure are significant. Training complex HMMs, large-scale NLP models, and backtesting adaptive strategies across various regimes demands substantial computational power. Cloud computing resources, with their scalable GPU and CPU capabilities, become indispensable. Furthermore, the operationalization of these models—deploying them in a production environment for real-time inference and signal generation—requires sophisticated low-latency trading systems. This includes robust monitoring, alerting, and failover mechanisms to ensure continuous operation and minimize downtime. The computational burden also extends to the continuous retraining and recalibration of models as market dynamics evolve, a necessary step to maintain their predictive power and adapt to new information.

Thirdly, model robustness and overfitting are constant concerns. While HMMs and machine learning models can identify intricate patterns, they are susceptible to overfitting, especially when dealing with noisy financial data and a limited number of distinct market regimes. Rigorous backtesting, cross-validation, and out-of-sample testing are non-negotiable. Strategies must be evaluated not just on their historical performance but on their ability to generalize to unseen market conditions. This often involves techniques like walk-forward optimization, Monte Carlo simulations, and stress testing against extreme market events. Moreover, the interpretation of model outputs, particularly the inferred regimes and their associated probabilities, requires a deep understanding of the model's limitations and assumptions. A purely black-box approach can lead to unexpected and potentially catastrophic outcomes.
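Walk-forward evaluation can be sketched as a generator of rolling train/test windows in which every test window lies strictly after its training window. The function name and window sizes are illustrative assumptions.

```python
def walk_forward_splits(n_obs, train_size, test_size, step=None):
    """Yield (train_range, test_range) pairs for rolling walk-forward testing.

    Each test window starts right after its training window ends, so the
    model is never evaluated on data that precedes its training data.
    """
    step = step or test_size
    start = 0
    while start + train_size + test_size <= n_obs:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += step


splits = list(walk_forward_splits(n_obs=10, train_size=4, test_size=2))
for train, test in splits:
    print(f"train {list(train)} -> test {list(test)}")
```

For regime models in particular, refitting inside each training window (including any feature scaling) helps avoid leaking future information into the test results.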

Finally, dynamic risk management is integral to the success of adaptive strategies. As the market transitions between regimes, the optimal risk profile of a portfolio changes. A strategy that performs well in a low-volatility, growth-oriented regime might incur significant losses in a high-volatility, recessionary environment. The algorithmic framework must dynamically adjust position sizing, leverage, and stop-loss levels based on the current inferred regime and prevailing market conditions, such as elevated interest rates [6]. This requires a sophisticated risk engine that can integrate regime-specific volatility, correlation, and drawdown expectations into its decision-making process, ensuring that the strategy's overall risk remains within acceptable bounds even as its underlying components adapt.

Key Takeaways

- Adaptive Strategies are Essential: The 2026 market, characterized by significant sector rotation and macro shifts, necessitates algorithmic strategies that can dynamically adapt to changing regimes [1, 3, 7].
- Regime Detection is Foundational: Hidden Markov Models (HMMs) provide a robust framework for identifying distinct market regimes (e.g., growth, inflation, recession) based on observable economic and market data [6].
- Earnings Data Fuels Event-Driven Alpha: Algorithmic interpretation of earnings reports, including quantitative analysis of surprises and qualitative assessment of transcripts (NLP), is crucial for generating tactical alpha opportunities [2, 4].
- Multi-Layered Approach: Combining macro regime detection with granular, event-driven signals from earnings creates a powerful, context-aware algorithmic trading system.
- Data Infrastructure is Critical: High-quality, low-latency data feeds for both structured financial data and unstructured textual data are non-negotiable for effective implementation.
- Computational Resources are Demanding: Training and deploying complex HMMs and NLP models, along with extensive backtesting, require significant computational power and robust infrastructure.
- Rigorous Validation and Dynamic Risk Management: Overfitting is a major risk; strategies must be rigorously backtested and stress-tested, with risk parameters dynamically adjusted based on the current market regime to ensure robustness.

Applied Ideas

The frameworks discussed above are not merely academic exercises — they translate directly into deployable trading logic. Here are concrete next steps for practitioners:

- Backtest first: Validate any regime-detection or signal-generation approach with walk-forward analysis before committing capital.
- Start small: Deploy with fractional position sizing and paper-trade for at least one full market cycle.
- Monitor regime shifts: Set automated alerts for when your model detects a regime change; manual review before large rebalances is prudent.
- Iterate on KPIs: Track Sharpe, Sortino, max drawdown, and win rate weekly. If any metric degrades beyond your predefined threshold, pause and re-evaluate.
- Combine signals: The strongest edges come from combining uncorrelated signals, so pair the ideas in this post with your existing alpha sources.
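The KPIs listed above can be computed from a series of periodic returns with the standard library alone. This is a minimal sketch: it assumes a zero risk-free rate, daily data (252 periods per year), and a made-up return series, and the function name is hypothetical.

```python
import math
from statistics import mean, stdev


def performance_kpis(returns, periods_per_year=252):
    """Sharpe, Sortino, max drawdown, and win rate from periodic returns.

    Assumes a zero risk-free rate and at least two returns with nonzero spread.
    """
    avg = mean(returns)
    annualize = math.sqrt(periods_per_year)
    sharpe = avg / stdev(returns) * annualize

    # Downside deviation: root mean square of the negative returns only
    downside = math.sqrt(mean(min(r, 0.0) ** 2 for r in returns))
    sortino = avg / downside * annualize if downside > 0 else float("inf")

    # Max drawdown: largest peak-to-trough decline of the compounded equity curve
    equity, peak, max_dd = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        max_dd = max(max_dd, 1.0 - equity / peak)

    win_rate = sum(r > 0 for r in returns) / len(returns)
    return {"sharpe": sharpe, "sortino": sortino,
            "max_drawdown": max_dd, "win_rate": win_rate}


sample_returns = [0.01, -0.02, 0.015, 0.005, -0.005]  # made-up daily returns
kpis = performance_kpis(sample_returns)
for name, value in kpis.items():
    print(f"{name}: {value:.4f}")
```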

Sources & Research

7 articles that informed this post

[1] 2026 Macro Regime: Algorithmic Strategies Target Growth Sectors Amidst Divergent Earnings

[2] Algorithmic Lens on Galiano Gold's Q1 Earnings for Event-Driven Strategies

[3] Q1/Q4 2026 Earnings Reveal Sector Rotation: Quant Strategies Target Financials & Healthcare Leadership

[4] Algorithmic Edge: Unpacking Q1/Q4 2026 Earnings for Event-Driven Strategies

[5] Algorithmic Sector Rotation: Navigating Data Scarcity with Economic Cycle Models

[6] Navigating Elevated Rates: Algorithmic Strategies for Macro Crosscurrents

[7] Algorithmic Sector Rotation: Capitalizing on Tech & Growth Resurgence Amidst Defensive Weakness

From Theory to Practice

The concepts discussed in this article are exactly what we build into our products at QuantArtisan.
